New Pollfish Feature: Sequential Testing, A Variation of Monadic Testing


The Pollfish A/B testing functionality keeps getting stronger: we now offer a second version, Sequential Testing, to fortify all your concept testing needs. In this version of A/B testing, researchers can test multiple concepts with each respondent, as opposed to just one per respondent with monadic testing.

In this second iteration, the testing is also done within one group of questions, but researchers can test more concepts per respondent. This grants researchers added flexibility and efficiency, as a single survey can test multiple concepts.

This version is available for all accounts on the Pollfish platform.

This article explains sequential testing, how to use it in the Pollfish platform and the three versions Pollfish offers.

Understanding Sequential Testing

Sequential testing affects the cost of the survey by way of the following calculation:

The cost calculation formula:

(the # of questions included in the A/B test) X (# of concepts presented to the respondent)

Example:

Questions in the A/B test: 12

Number of concepts presented: 4

12 X 4 = 48

As such, on a Basic plan, the CPI will be $4 (the 46-50 question tier).
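The cost rule above can be sketched in a few lines of Python. This is a minimal illustration, not Pollfish's pricing engine: the function names are hypothetical, and the tier table holds only the single Basic-plan tier quoted in this article (46-50 questions at a $4 CPI), so any other count returns `None`.

```python
from typing import Optional

# Only the tier quoted in this article; other Basic-plan tiers are not listed.
BASIC_PLAN_TIERS = [((46, 50), 4.00)]


def billed_question_count(ab_questions: int, concepts_per_respondent: int) -> int:
    """Questions counted for pricing: A/B test questions times concepts shown."""
    return ab_questions * concepts_per_respondent


def basic_plan_cpi(question_count: int) -> Optional[float]:
    """Look up the CPI for a question count, or None if no tier here covers it."""
    for (low, high), cpi in BASIC_PLAN_TIERS:
        if low <= question_count <= high:
            return cpi
    return None


count = billed_question_count(12, 4)
print(count, basic_plan_cpi(count))  # prints: 48 4.0
```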

Question Distribution

In this version of the A/B test, concepts follow a specific distribution: all possible combinations are derived from the concepts selected to be shown. This way, concepts are evenly distributed, and each one is presented to respondents in the first position an equal number of times.

This reduces the bias that can arise from always serving a concept in the first position of a combination.

Example:

Sequential test with 2 concepts per respondent, selected from 3 concepts (A, B, C)

A sampling pool of 300 respondents

We have the following combinations of 2 out of the 3 concepts:

AB, BC, AC

This means that each concept will be seen by more participants than in the monadic version, where splitting 300 respondents evenly across 3 concepts would give each concept about 100 views. In this example of 300 respondents, each concept will be seen by 200 respondents, for a total of 600 views across all concepts.

The distribution should take place evenly across all combinations (300 respondents / 3 combinations = 100 responses per combination):

AB or BA x 100

BC or CB x 100

AC or CA x 100
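The distribution above can be reproduced with Python's `itertools`. This sketch is an assumption about one way to achieve it, not Pollfish's actual assignment mechanism: it builds every combination, includes both orderings of each so every concept leads equally often, and hands them out round-robin. Counts come out perfectly even only when the number of respondents is a multiple of the number of ordered sequences (6 here).

```python
from collections import Counter
from itertools import combinations, permutations


def allocate(concepts, per_respondent, respondents):
    """Assign each respondent one ordered sequence of concepts (round-robin)."""
    # Every unordered combination, e.g. AB, AC, BC.
    combos = list(combinations(concepts, per_respondent))
    # Every ordering of each combination, e.g. AB and BA, so each concept
    # appears in the first position equally often.
    sequences = [seq for combo in combos for seq in permutations(combo)]
    return [sequences[i % len(sequences)] for i in range(respondents)]


assignment = allocate("ABC", 2, 300)
per_combination = Counter("".join(sorted(seq)) for seq in assignment)
views = Counter(concept for seq in assignment for concept in seq)
first_position = Counter(seq[0] for seq in assignment)

print(per_combination)  # 100 each for AB, AC, BC
print(views)            # 200 each for A, B, C (600 views in total)
print(first_position)   # 100 each for A, B, C
```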

Results and Exports

The results and exports will be presented as they are in the monadic testing version. The difference is that each concept will have accrued more views and responses than it would have in the monadic version.

This is expected, since each respondent in a sequential test evaluates more than one concept, which yields more responses per concept.

What You’ll Find in the Charts of Sequential Testing

Each question type included in a sequential test (and a monadic test) will be accompanied by a chart.

In a bar chart, the columns represent either concepts or answers, depending on the view you select. The charts are built from the data in the table.

In the top right-hand corner of each question you applied sequential testing to, you can check the results in two ways: click the “View” dropdown and choose between “by concept” and “by results,” where applicable.

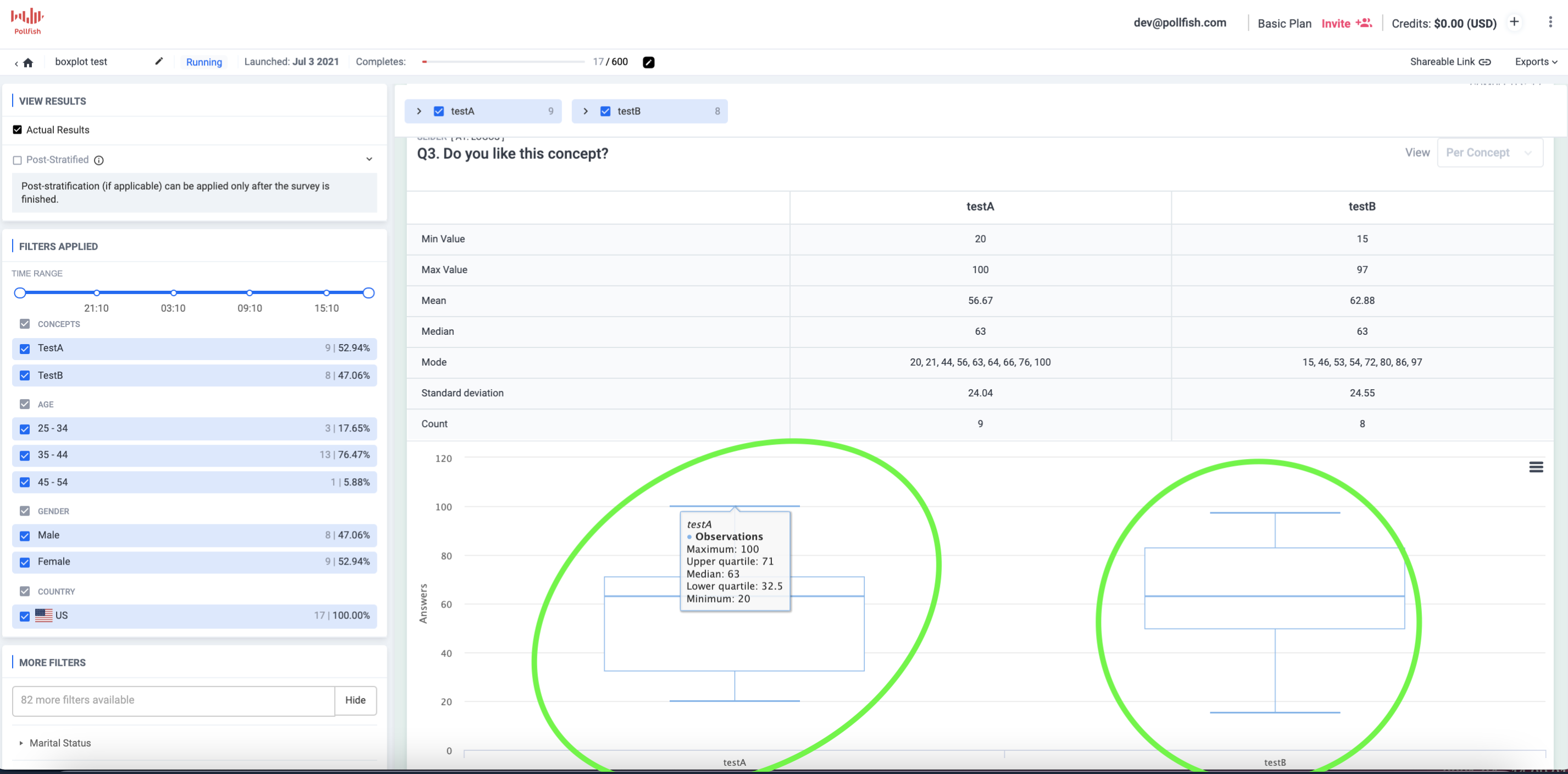

Slider and numeric open-ended questions are presented as a box plot per concept.

A boxplot is a standardized way of displaying the distribution of data based on a five-number summary (“minimum”, first quartile (Q1), median, third quartile (Q3), and “maximum”). It tells you about your outliers and what their values are.

It can also tell you if your data is symmetrical, how tightly your data is grouped, and if and how your data is skewed.
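To make the five-number summary concrete, here is a minimal sketch using Python's standard `statistics` module. It assumes the common Tukey convention (whiskers end at the most extreme values within 1.5 × IQR of the quartiles, and anything beyond is an outlier) and the "inclusive" quartile method. Pollfish does not document which conventions its charts use, so its box plots may differ slightly.

```python
import statistics


def five_number_summary(values):
    """Box-plot statistics for one concept, using Tukey's 1.5 x IQR fences."""
    q1, median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    low_fence, high_fence = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    inside = [v for v in values if low_fence <= v <= high_fence]
    return {
        "minimum": min(inside),  # whisker end, not necessarily the raw minimum
        "q1": q1,
        "median": median,
        "q3": q3,
        "maximum": max(inside),  # whisker end, not necessarily the raw maximum
        "outliers": [v for v in values if v < low_fence or v > high_fence],
    }


# Slider answers for one concept, with one unusually high value.
print(five_number_summary([1, 2, 3, 4, 5, 6, 7, 8, 9, 100]))
```

With these answers, the quartiles are 3.25, 5.5 and 7.75, the whiskers run from 1 to 9, and 100 is reported as an outlier.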

Box plots are presented on the results page.

The Benefits of a Sequential Test

The main benefit of a sequential testing approach is the improved efficiency of the test. In sequential testing, each respondent views either all of the concepts or a subset of them, and each concept is followed by the same evaluation questions.

Having respondents view and evaluate several concepts in a single survey is far more efficient than testing each concept separately outside of an A/B test.

With both monadic and sequential A/B testing, researchers can incorporate many concepts. The key difference is the mechanism that distributes these concepts: in the monadic version, each respondent views and answers questions about only one random concept, whereas in the sequential version, each respondent views and answers questions about several concepts.

As such, the sequential version grants researchers an added layer of flexibility in their concept setup.

The sequential approach is data-rich, as the Pollfish platform allows researchers to filter their data for each concept, both by concept and by answer. This provides a much more granular view of exactly how each concept was received. Best of all, it is provided in one place, on one page.

How to Set Up a Sequential Test

Setting up a sequential A/B test is a fairly simple task. Here are the steps to set up and use the sequential version:

- In the questionnaire, add an A/B test.

- Under “type,” select the sequential type.

- In the concepts table, add as many concepts as you need.

- You can also add attributes.

- Under the number of concepts to be presented per respondent, select how many concepts each respondent will view.

- Note that the number of concepts presented to each respondent cannot be larger than the number of concepts in the concepts table.


- Define the questions that will be in the test.

- Proceed to the checkout.
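The one hard rule in these steps, that you cannot present more concepts per respondent than the concepts table holds, can be expressed as a small validation check. The `SequentialTestConfig` class and its field names are hypothetical, used only to illustrate the rule; the `combination_count` helper uses `math.comb` to show how many distinct combinations respondents can receive.

```python
from dataclasses import dataclass, field
from math import comb
from typing import List


@dataclass
class SequentialTestConfig:
    """Hypothetical model of the sequential A/B test settings."""
    concepts: List[str]
    concepts_per_respondent: int
    questions: List[str] = field(default_factory=list)

    def validate(self) -> None:
        # Rule from the setup steps: you cannot present more concepts per
        # respondent than there are concepts in the concepts table.
        if self.concepts_per_respondent > len(self.concepts):
            raise ValueError(
                f"{self.concepts_per_respondent} concepts per respondent, "
                f"but only {len(self.concepts)} concepts in the table"
            )

    def combination_count(self) -> int:
        """Number of distinct concept combinations respondents can receive."""
        return comb(len(self.concepts), self.concepts_per_respondent)


config = SequentialTestConfig(["A", "B", "C"], 2, ["Q1", "Q2"])
config.validate()
print(config.combination_count())  # prints: 3
```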

Innovating with Sequential Testing

This version of A/B testing is ideal not just for testing ideas but also for fostering innovation, all from one survey. This is because researchers can use sequential testing questions alongside regular questions in the same survey.

This allows researchers to test several concepts with each respondent in the same survey that carries their regular questions.

So go ahead, use all the concepts in one test as you please, for all your market research campaign needs. Or, stick to just a few.

How to Use the New Carry Forward Feature for an Enhanced Survey Experience


As the heart of any survey, the questionnaire must be crafted carefully so that you receive the responses your research needs most. Creating the questions themselves can be difficult, especially if you choose to create question paths.

Pollfish is thus thrilled to present a new feature to make building the questions a much easier task: Carry Forward. This new attribute provides advanced piping capabilities to optimize your questionnaire experience.

The Purpose of the Carry Forward Feature

As a refresher, piping is a functionality that allows users to place (or “pipe”) part of a question or answer into a subsequent question or answer.

In the Pollfish platform, piping works by taking the answer(s) from the sender question and inserting them into the receiver question.

In the first piping iteration, researchers were able to funnel answer choices from one question to another based on respondents’ selections, so that a later question would carry forward the answers piped from an earlier one.

The new Carry Forward feature carries (no pun intended) the function of enriching the question-building experience, as it allows you to pipe answers across more question and answer types, along with other capabilities.

This new feature helps researchers design specific questions that are more relevant to the respondent’s behavior, and more useful to their research.

It works on both selected and unselected answers, and it can be used with:

- Matrix questions

- Ranking questions

- Single selection questions

- Multiple selection questions

Laying Out the Carry Forward Capabilities

Multiple Selection Questions

Along with carrying forward selected answers, this feature allows researchers to carry forward all the answers that the respondent did not select.

In the case of a multiple selection question, for example, the feature can carry forward the unselected answers into the receiver question.

As a result, when a respondent selects all the answers and proceeds, there is nothing to carry forward, as no unselected answers remain. For this reason, the Pollfish platform includes a validation in the form of a dialog box.

This pop-up lets the researcher know that Carry Forward cannot support this case: carrying forward unselected answers only works if at least one answer is left unselected.
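The selected/unselected behavior described above can be sketched in Python. The function names and the `"selected"`/`"unselected"` mode strings are hypothetical, not Pollfish identifiers. The second function is one possible reading of the validation: it assumes the sender caps how many answers a respondent may pick, and flags the case where the cap allows selecting every option, which would leave nothing to carry forward.

```python
from typing import List


def carry_forward(options: List[str], selected: List[str], mode: str) -> List[str]:
    """Return the sender options to show in the receiver, in the sender's order."""
    chosen = set(selected)
    if mode == "selected":
        return [o for o in options if o in chosen]
    if mode == "unselected":
        return [o for o in options if o not in chosen]
    raise ValueError(f"unknown mode: {mode!r}")


def unselected_could_be_empty(option_count: int, max_selections: int) -> bool:
    # Assumption: if a respondent may pick every option, an "unselected"
    # Carry Forward can end up with no answers, so the researcher is warned.
    return max_selections >= option_count


colors = ["Red", "Green", "Blue"]
print(carry_forward(colors, ["Blue", "Red"], "selected"))    # ['Red', 'Blue']
print(carry_forward(colors, ["Blue", "Red"], "unselected"))  # ['Green']
print(carry_forward(colors, colors, "unselected"))           # []
```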

Advanced Logic

Carry Forward can be used in tandem with advanced logic, allowing you to add multiple layers to your survey.

Enabling advanced logic (ADL) can trigger questions without forwarded answers. For example, when Carry Forward is enabled but a respondent skipped the sender question, the respondent will be routed to a question without Carry Forward answers.

Pollfish has also added front-end validation that prevents researchers from proceeding with this structure.
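One way to detect the structure described above is a reachability check: treat the questionnaire as a directed graph (the normal next question plus any advanced-logic jumps) and ask whether the receiver can be reached without passing through the sender. This is a hypothetical sketch with made-up question IDs; Pollfish does not document how its own front-end validation works.

```python
from collections import deque
from typing import Dict, List, Set


def reachable_without(start: str, edges: Dict[str, List[str]], blocked: str) -> Set[str]:
    """Breadth-first search over the questionnaire, never entering `blocked`."""
    seen = {start}
    queue = deque([start])
    while queue:
        question = queue.popleft()
        for nxt in edges.get(question, []):
            if nxt != blocked and nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def receiver_can_skip_sender(start: str, edges: Dict[str, List[str]],
                             sender: str, receiver: str) -> bool:
    """True if some route reaches the receiver without passing the sender."""
    if start == sender:
        return False  # every route begins at the sender, so none skips it
    return receiver in reachable_without(start, edges, sender)


# Q1 normally leads to Q2, but an advanced-logic rule can jump straight to Q3.
flow = {"Q1": ["Q2", "Q3"], "Q2": ["Q3"], "Q3": []}
print(receiver_can_skip_sender("Q1", flow, sender="Q2", receiver="Q3"))  # True
```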

Sender questions with a “None of the above” or “Other” option must be structured correctly, that is, as multiple selection questions. If these options are added to the wrong type of question, pop-up error messages will appear.

Carry Forward Answers that Contain Media

If the Carry Forward answer type is the same or similar to the source (question) type, such as:

- single to single,

- multiple to multiple,

- single to multiple, etc.,

then the platform will carry forward the media files together with the answers.

In other cases, such as when the sender and receiver questions are of different types, specific rules dictate how Carry Forward works.

How Carry Forward handles answers that contain media:

- If the Carry Forward answer type is the same as or similar to (single, multiple) the source type, the media will be carried forward.

- If the Carry Forward answer type doesn’t support media, then:

  - The text will be carried forward if the source answer contains both text and media.

  - Carry Forward will not be supported if the source answer contains only media.
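The media rules above amount to a small decision function. In this sketch, the `Answer` type and the type strings are hypothetical. It treats "same or similar" as identical types or any single/multiple pairing, and it assumes that every other receiver type does not support media, since the article does not describe a media-capable receiver of a different type.

```python
from dataclasses import dataclass
from typing import Optional

SIMILAR_TYPES = {"single", "multiple"}


@dataclass(frozen=True)
class Answer:
    text: Optional[str] = None
    media: Optional[str] = None  # e.g. an image file name


def carry_with_media(answer: Answer, source_type: str, receiver_type: str) -> Optional[Answer]:
    """Return the answer as carried into the receiver, or None if unsupported."""
    same_or_similar = source_type == receiver_type or (
        source_type in SIMILAR_TYPES and receiver_type in SIMILAR_TYPES
    )
    if same_or_similar:
        return answer  # text and media are both carried forward
    # Assumption: any other receiver type is treated as not supporting media.
    if answer.text:
        return Answer(text=answer.text)  # keep the text, drop the media
    return None  # a media-only answer cannot be carried forward


logo = Answer("Logo A", "logo_a.png")
print(carry_with_media(logo, "single", "multiple"))  # text and media kept
print(carry_with_media(logo, "single", "ranking"))   # text only
```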

How to Add Carry Forward to Your Questionnaire

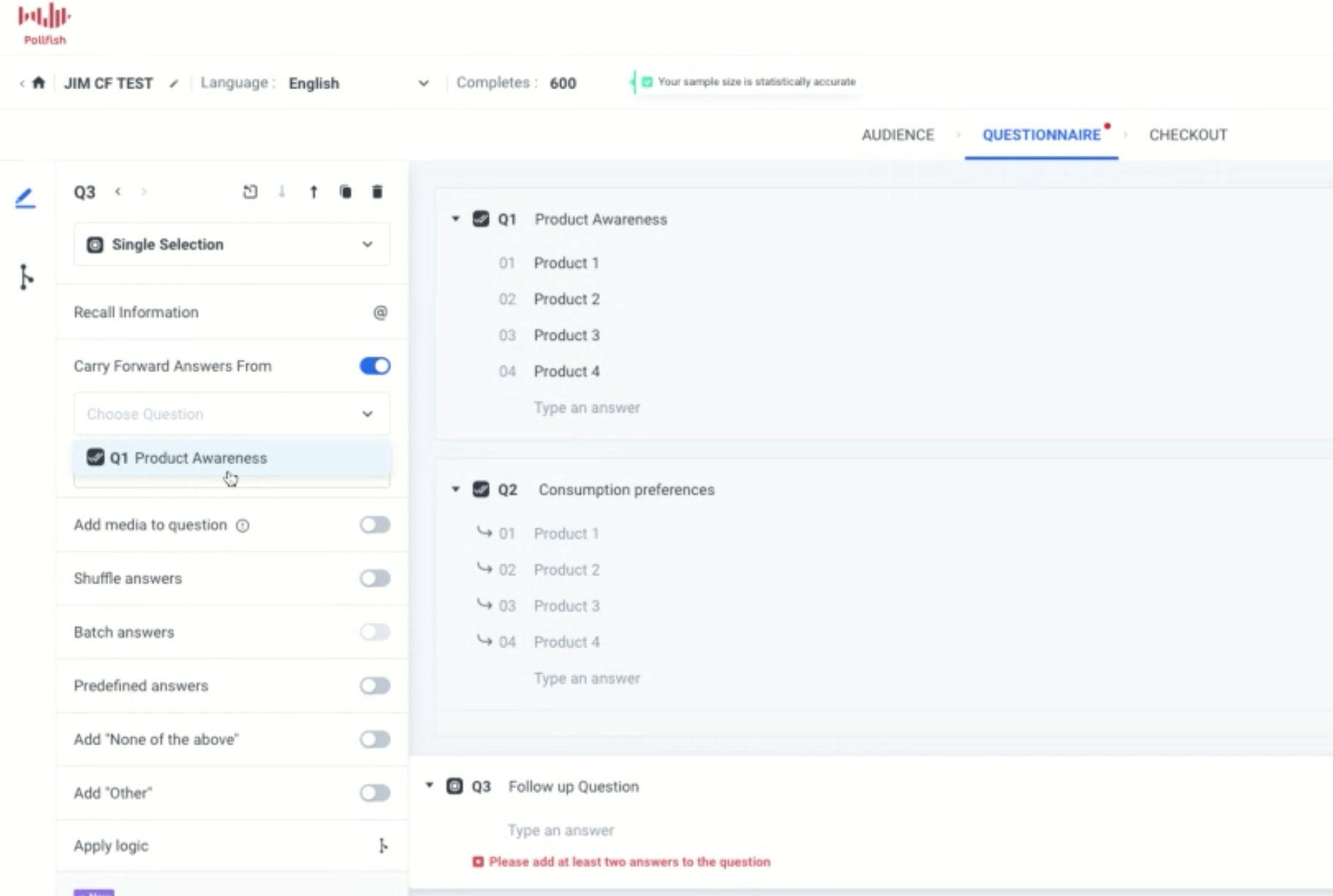

In order to add the Carry Forward feature, you’ll need to enter the questionnaire portion of the survey first (after completing the audience section). You’ll also need to have your questions and answers in mind.

You can add Carry Forward while building a new questionnaire, as long as it has at least two questions: the sender and the receiver question. You can also add it to an existing questionnaire.

- Find the Carry Forward option at the left panel of the questionnaire.

- Find a sender and a receiver question you wish to apply the Carry Forward feature to. These can be any two questions, as long as the sender comes before the receiver. For example, you can use Question 1 as the sender question and Question 2 as the receiver question.

- Enable it via the receiver question: select “Carry Forward,” then choose the selected or unselected answers from a previous question (the sender question).

What Carry Forward Supports Vs. What It Does Not Support

Certain conditions must be met in order to apply the Carry Forward function, and in some circumstances your questions will not be able to use it.

What it supports:

- Carry Forward can be used with single, multiple, ranking and matrix questions when they are designed as receiver questions.

- When you carry forward a matrix question, there’s an additional option to narrow the choices based on selected columns, unselected columns, rows for selected columns, rows for unselected columns, and columns for specific rows.

- It is supported by single, multiple, open-ended, numeric, ranking, matrix and slider questions when they are set up as sender questions.

- The researcher can carry forward all the answers that the respondent didn’t select.

- Advanced logic and Carry Forward can be used at the same time.

- It supports the Order/Shuffle answers option for funneled questions.

What it doesn’t support:

- Carry Forward cannot be used with description questions, Net Promoter Score (NPS) surveys and visual ratings surveys.

- It cannot be used with screening questions.

- It does not support the option of “Group and Randomize.”
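The support lists above can be turned into a pre-flight check for a sender/receiver pair. The type strings, the `Question` class and the per-question `group_and_randomize` flag are hypothetical stand-ins for whatever the platform uses internally. Description, NPS and visual rating questions are rejected simply because they appear in neither supported set.

```python
from dataclasses import dataclass
from typing import List

# Types taken from the lists in this article; the string names are hypothetical.
RECEIVER_TYPES = {"single", "multiple", "ranking", "matrix"}
SENDER_TYPES = {"single", "multiple", "open_ended", "numeric",
                "ranking", "matrix", "slider"}


@dataclass
class Question:
    qtype: str
    is_screening: bool = False
    group_and_randomize: bool = False


def carry_forward_problems(sender: Question, receiver: Question) -> List[str]:
    """List the reasons a sender/receiver pair cannot use Carry Forward."""
    problems = []
    if sender.qtype not in SENDER_TYPES:
        problems.append(f"'{sender.qtype}' cannot be a sender question")
    if receiver.qtype not in RECEIVER_TYPES:
        problems.append(f"'{receiver.qtype}' cannot be a receiver question")
    for role, question in (("sender", sender), ("receiver", receiver)):
        if question.is_screening:
            problems.append(f"the {role} is a screening question")
        if question.group_and_randomize:
            problems.append(f"the {role} uses Group and Randomize")
    return problems


print(carry_forward_problems(Question("slider"), Question("matrix")))  # []
print(carry_forward_problems(Question("nps"), Question("description")))
```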

Note: responses that are carried forward are treated the same as other answer choices on the results page.

We suggest you preview your survey design before submitting the survey itself. Try it out!