Qualitative vs Quantitative Survey Questions

Researchers use quantitative and qualitative methods to gain a complete understanding of a target audience’s needs, challenges, wants, willingness to take action, and more. However, the right time to use either method (or both together) depends on your research goals and needs.

Difference between Quantitative and Qualitative Research

Quantitative research collects information that can be expressed numerically. Researchers often use it to relate data to specific demographics, for example to test whether Gen Z is more likely than Millennials to focus on their finances. Quantitative research is usually conducted through surveys or web analytics, often with large samples so that trends are statistically representative.

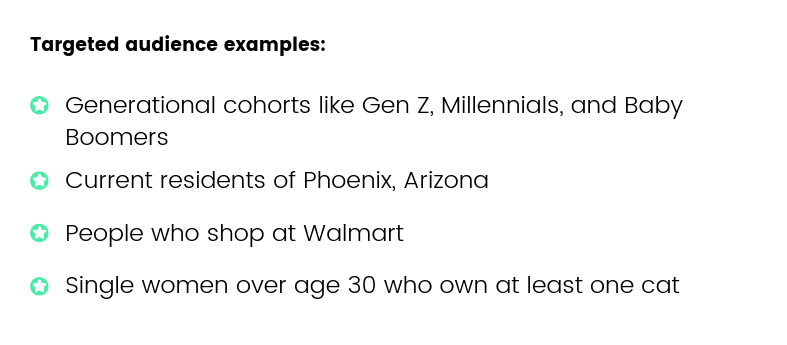

Even when the survey audience is very large, quantitative research can be targeted toward a specific audience, usually defined by demographic information such as age, gender, and geographic location.

Qualitative research focuses on individual behavior, such as people’s habits and the motivations behind their decisions. It can be gathered through contextual inquiries or interviews to learn more about feelings, attitudes, and habits that are harder to quantify but offer important context to support statistical data.

When quantitative and qualitative research are paired, a complete set of data can be gathered about the target audience’s demographics, experience, attitudes, behaviors, wants, and needs.

Benefits of Quantitative Survey Questions

Quantitative survey questions are an excellent starting point in market research, allowing a researcher to “take the temperature” of a population to ensure there is a want or need for a product or service before investing in expensive qualitative research.

Reaching bigger, broader audiences

Quantitative survey questions are best for gathering broad insights, developing basic profiles, and validating assumptions about an unknown (or little-known) audience.

Mobile survey compatibility

Mobile surveys work especially well with closed-ended quantitative questions, because respondents can answer them quickly with a tap instead of typing on a small screen.

Statistical accuracy

Quantitative surveys are ideal when working with a control group or when you need responses from a statistically representative sample of a population. They can be deployed broadly, and the results can be weighted for statistical accuracy after the survey is complete.
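The article does not say how results are weighted. One common technique is post-stratification (cell weighting). Each respondent in a group gets a weight equal to that group's population share divided by its sample share. The sketch below assumes a single weighting variable, with made-up shares and answers. Real projects often weight on several variables at once, for example with raking.

```python
from collections import Counter


def poststratification_weights(sample_groups, population_shares):
    """Weight per group = population share / sample share (simple cell weighting)."""
    counts = Counter(sample_groups)
    n = len(sample_groups)
    return {group: population_shares[group] / (count / n) for group, count in counts.items()}


def weighted_mean(values, groups, weights):
    """Mean of `values`, where each respondent counts with their group's weight."""
    total_weight = sum(weights[g] for g in groups)
    return sum(v * weights[g] for v, g in zip(values, groups)) / total_weight


# Hypothetical sample: 60 women and 40 men, for a population that is 50/50.
groups = ["female"] * 60 + ["male"] * 40
weights = poststratification_weights(groups, {"female": 0.5, "male": 0.5})

# 1 = agrees with a statement: 30 of 60 women and 10 of 40 men agree.
agrees = [1] * 30 + [0] * 30 + [1] * 10 + [0] * 30
print({g: round(w, 3) for g, w in weights.items()})      # {'female': 0.833, 'male': 1.25}
print(round(weighted_mean(agrees, groups, weights), 3))  # 0.375 (unweighted: 0.4)
```

Women are over-represented in this sample, and they agree more often than men. As a result, the unweighted share (0.4) overstates agreement relative to the 50/50 population (0.375).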

Benefits of Qualitative Survey Questions

Qualitative survey questions aim to gather data that is not easily quantified, such as attitudes, habits, and challenges. They are often used in an interview-style setting, where the interviewer can observe behavioral cues that may help direct the questions.

Gaining context

Qualitative survey questions tend to be open-ended and aim to gather contextual information about particular sets of data, often focused on the “why” or “how” reasoning behind a respondent’s answer.

Unexpected answers

The open-ended nature of qualitative survey questions makes it possible to discover solutions that a traditional quantitative survey, with its fixed answer choices, may never have presented. Allowing respondents to express themselves freely may reveal new paths to explore further.

Examples of Quantitative Survey Questions

Quantitative survey questions are used to gain information about frequency, likelihood, ratings, pricing, and more. They often include Likert scales and other survey question types to engage respondents throughout the questionnaire.

How many times did you use the pool at our hotel during your stay?

- None

- Once

- 2-3 times

- 4 or more times

How likely are you to recommend this service to a friend?

- Very likely

- Somewhat likely

- Neutral

- Somewhat unlikely

- Very unlikely

Please select your answer to the following statement: “It’s important to contribute to a retirement plan.”

- Strongly agree

- Somewhat agree

- Neutral

- Somewhat disagree

- Strongly disagree
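Because the answer choices sit on an ordered scale, responses to a Likert question like the one above can be converted to numbers for analysis. The sketch below assumes the common 1-5 coding, which is a convention and not something the article prescribes. It reports two figures: the mean score and the top-two-box share, meaning the fraction of respondents who chose one of the two "agree" options. Likert data is ordinal, so many analysts report the top-two-box share or the full distribution alongside, or instead of, the mean.

```python
# Common 1-5 coding for a five-point agreement scale (an assumed convention).
LIKERT_SCORES = {
    "Strongly disagree": 1,
    "Somewhat disagree": 2,
    "Neutral": 3,
    "Somewhat agree": 4,
    "Strongly agree": 5,
}


def summarize_likert(responses):
    """Return (mean score, top-two-box share) for a list of answer labels."""
    if not responses:
        raise ValueError("no responses to summarize")
    scores = [LIKERT_SCORES[answer] for answer in responses]
    mean_score = sum(scores) / len(scores)
    top_two_box = sum(1 for s in scores if s >= 4) / len(scores)
    return mean_score, top_two_box


answers = ["Strongly agree", "Somewhat agree", "Neutral", "Strongly disagree"]
print(summarize_likert(answers))  # (3.25, 0.5)
```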

Examples of Qualitative Survey Questions

Qualitative survey questions aim to extract information that is not easily quantifiable, such as feelings, behaviors, and motivations for making a choice. Researchers ask open-ended questions and follow up with “why?”, which gives respondents the freedom to explain what led to their decisions. A technique called the Five Whys asks “why?” repeatedly to trace an answer back to its root cause, and it is commonly used for this purpose. Some examples of qualitative survey questions are:

How would you improve your experience?

Describe the last time that you purchased an item online.

Why did you choose to take public transportation to the airport?

When to Use Quantitative and Qualitative Survey Questions

Whether you should use quantitative or qualitative survey questions depends on your research goals. Most often, both kinds are needed during different phases of a research project to create a complete picture of a market need, user base, or persona.

When to use quantitative survey questions

- Initial research. Quantitative research is typically less expensive and less time-intensive than qualitative research. It’s therefore usually best to begin with quantitative surveys, using the best survey platform for market research. These can help ensure a research project is defined for the right target audience before you invest in qualitative insights.

- Statistical data. Statistically accurate data, such as that which can be mapped to the census, can be collected through quantitative survey questions. This is ideal for ensuring an accurate sample in polling and national surveys.

- Broad insights. Quantitative survey questions are ideal for gaining a 10,000-foot view of a market to determine needs, wants, and desire for a product or service based on demographic data that will help shape product development or marketing campaigns.

- Quantifiable behaviors. How often a person visited a web page, how likely they are to purchase an item, and how much they are willing to pay for a product or service are all behavioral insights that can be gathered through quantitative survey questions.

- Mobile survey environments. Data quality can be impacted by the survey distribution method. Because mobile devices are hand-held and mobile audiences are on the go, quantitative survey questions that offer limited answer choices and quick responses tend to yield better data quality than open-ended responses that involve typing and more concentration.

When to use qualitative survey questions

- Gain context about quantifiable data. Research that begins with quantitative data might reveal an unexpected trend that requires further inquiry among a certain group. Qualitative questions can then help explain why the trend exists.

- Understand hard-to-quantify behaviors. Thoughts, opinions, beliefs, motivations, challenges, and goals can be uncovered through qualitative research questions.

- Persona development. Personas are tools used by designers, marketers, and other disciplines to create and sell products to people based on specific motivations and interests. While these often include demographic information based on quantitative research, challenges and needs are uncovered through qualitative methods.

- Conversational environments. Focus groups and interviews are ideal places to conduct qualitative research. Disciplines like psychology and user experience research rely heavily on qualitative questions to uncover motivations and reasoning behind certain behaviors.

It is ideal to use a mix of quantitative and qualitative methods to fill gaps in the data. These methods can be iterative and conducted at different points throughout a research project to follow up on and verify insights gathered from either method. Using both quantitative and qualitative survey questions, supported by the right market research tools, is the best way to holistically understand audience segments.

Frequently asked questions

Are quantitative survey questions good for market research?

Quantitative survey questions are an excellent starting point in market research, allowing a researcher to determine if there is a want or need for a product or service before investing in expensive qualitative research.

What are quantitative survey questions?

Quantitative survey questions are used to gain information about frequency, likelihood, ratings, pricing, and more. They often include Likert scales and other survey question types to engage respondents throughout the questionnaire.

What are qualitative survey questions?

Qualitative survey questions gather data that is not easily quantified, such as attitudes, habits, and challenges. They are often used in an interview-style setting, where the interviewer can observe behavioral cues that may help direct the questions.

How do qualitative and quantitative questions differ?

Quantitative survey questions usually offer fixed answer choices that produce numerical data. This makes them well suited to initial research and to defining a research project for the right target audience. Qualitative questions are often open-ended. They help answer “why,” add context to quantifiable data, and uncover hard-to-quantify behaviors.

Can quantitative research target a specific audience when the survey audience is large?

Yes. Even when the survey audience is very large, quantitative research can be targeted toward a specific audience, usually defined by demographic information such as age, gender, and geographic location.

How to use screening questions to reach your target audience

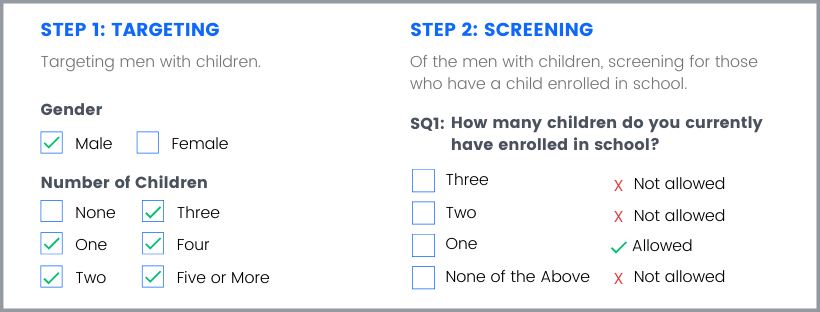

A screening question is a powerful type of survey question that can be used to narrowly target an audience based on behaviors, interests, or attitudes that aren’t available in the general demographic screening criteria.

You can connect earlier selections (including answers to screening questions) with later answers. You can also route respondents through specific paths curated for their demographic characteristics (except age).

Screening questions are set up separately from the rest of the questionnaire, at the same time as the other demographic targeting criteria. Things like age, gender, and location can be pre-selected in audience targeting. Screeners, by contrast, let respondents self-identify with specific characteristics or behaviors. They are best used at the beginning of the survey to filter for a qualified audience.

Pollfish now supports up to 6 screeners in the elite plan.

Logic access to demographic and screener answers

You can apply logic to questions that target specific personas. For example, suppose you want to create a survey in which respondents view and select a product, such as a clothing item. You would need to collect their gender, fit, favorite brand, and the type of clothing article they prefer.

How to apply logic to questions targeting specific personas

- Create a screening question so that its answers can be used in the logic.

- For example, qualify only the respondents who like Adidas or Nike.

- Add the screener’s answer to the dropdown in the question’s prompt.

- Then, you can present respondents who selected a particular answer, such as “training”, with specific curated questions based on their gender and their screener answer.

- Target specific segments of your population, such as female Nike lovers who prefer training shoes, male Nike lovers who prefer training shoes, male Adidas lovers who prefer training shoes, etc.

- Direct all respondents to an NPS question about the brand they selected in the screening question.
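The article doesn't say how the NPS question is scored. Under the standard Net Promoter Score convention, respondents answer on a 0-10 scale. Scores of 9-10 count as promoters and 0-6 as detractors. The score is the percentage of promoters minus the percentage of detractors. The sketch below groups hypothetical responses by the brand chosen in the screener. The function names and data are illustrative and are not part of Pollfish.

```python
from collections import defaultdict


def net_promoter_score(scores):
    """Percentage of promoters (9-10) minus percentage of detractors (0-6)."""
    if not scores:
        raise ValueError("no responses")
    if any(s < 0 or s > 10 for s in scores):
        raise ValueError("NPS scores must be on a 0-10 scale")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


def nps_by_brand(responses):
    """responses: (screener brand, 0-10 score) pairs -> NPS per brand."""
    scores_by_brand = defaultdict(list)
    for brand, score in responses:
        scores_by_brand[brand].append(score)
    return {brand: net_promoter_score(s) for brand, s in scores_by_brand.items()}


# Hypothetical answers from respondents who passed the Adidas/Nike screener.
responses = [("Nike", 10), ("Nike", 9), ("Nike", 8), ("Nike", 6), ("Nike", 3),
             ("Adidas", 10), ("Adidas", 10), ("Adidas", 9), ("Adidas", 7)]
print(nps_by_brand(responses))  # {'Nike': 0.0, 'Adidas': 75.0}
```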

How to set up logic rules with demographic and screening question (SQ) answer rules

- Go to your screening questions.

- Apply four similar logic rules to Q1 that present respondents with specific questions (Q2, Q3, Q4, or Q5) based on their selections in the first question.

- Choose your respondents, such as male/female Adidas fans who prefer training shoes.
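The four rules above can be modeled as a lookup from (gender, screener brand) to the next question. This is a hypothetical illustration of the branching, not Pollfish's logic engine. Three details are assumptions: which of Q2-Q5 each segment receives, the "training" answer to Q1 as the trigger, and the `next_question` helper itself.

```python
from typing import Optional

# Hypothetical mapping of the four logic rules: (gender, screener brand) -> question.
ROUTES = {
    ("female", "Nike"): "Q2",
    ("male", "Nike"): "Q3",
    ("female", "Adidas"): "Q4",
    ("male", "Adidas"): "Q5",
}


def next_question(gender: str, brand: str, q1_answer: str) -> Optional[str]:
    """Return the curated follow-up for training-shoe fans, or None to skip it."""
    if q1_answer != "training":
        return None
    return ROUTES.get((gender, brand))


print(next_question("female", "Nike", "training"))   # Q2
print(next_question("male", "Adidas", "training"))   # Q5
print(next_question("male", "Nike", "running"))      # None
```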

Best practices for writing screening questions

Like all good survey questions, screening questions should be clear, concise, and unbiased. However, they also pose a few challenges specific to their question type.

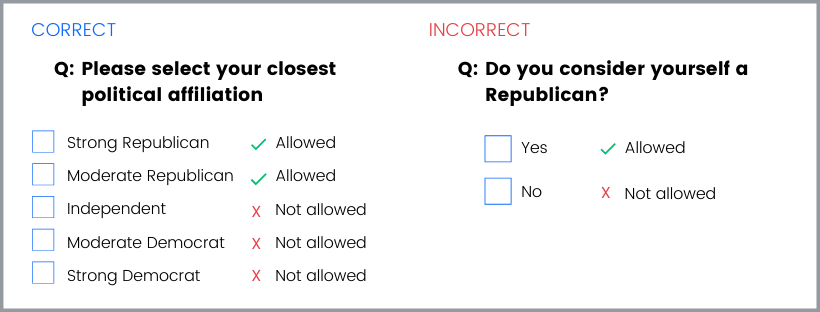

Avoid “yes” or “no” answer choices

While it can be tempting to build survey screening in a “yes or no” format, this creates bias within the question. Respondents are more likely to choose a response that is positive or that will obviously allow them to complete a survey. It’s best practice to create questions with multiple answer choices where it is not clear which is the desired response. This encourages respondents to answer honestly, rather than to choose something that they think will move them forward in the process, and you will end up with a more qualified pool of respondents.

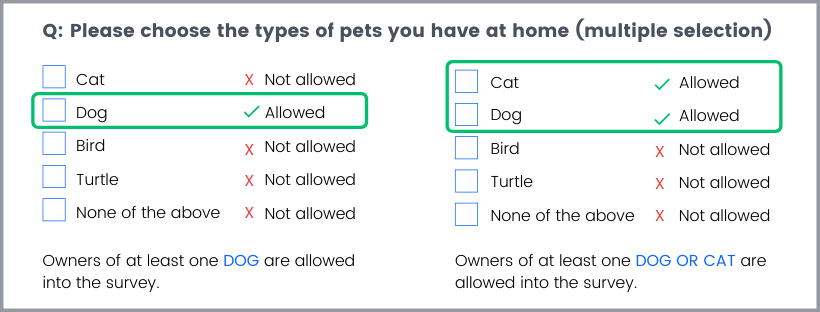

Use question types correctly

Screening questions can be single- or multiple-selection. Answers marked as “allowed” screen respondents in: anyone who selects at least one allowed answer can participate in the survey. In the “dog owner” example, users may select ownership of more than one pet. They will not be screened in (allowed to take the survey) unless at least one of those pets is a dog. If “cat” were also allowed, any respondent who chose “cat” or “dog”, along with any combination of other pets, would be screened in.

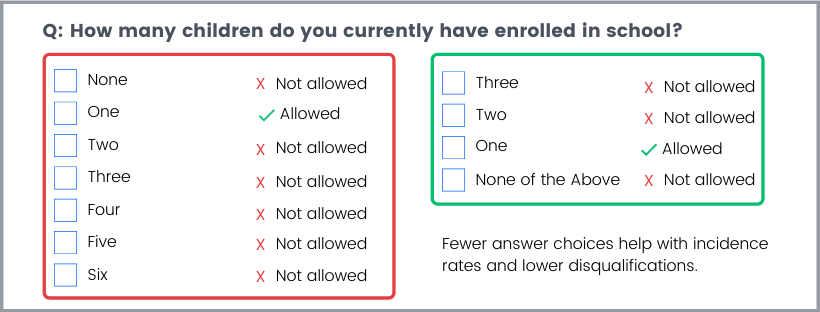

Limit answer choices

If you’re screening for a very specific answer, don’t provide many additional options that will be screened out. Disqualifying answers determine the incidence rate (the share of respondents who qualify), and a low incidence rate suggests a narrower, harder-to-reach audience. Many survey tools charge more for lower incidence rates, because this audience is harder for them to provide. (Pollfish doesn’t charge a premium for incidence rate. However, if the incidence rate falls below a certain percentage, the survey will be stopped automatically and adjustments will need to be made.)
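Under the usual definition, incidence rate is the number of respondents who qualify divided by the number screened. The hypothetical helpers below compute it. They also estimate how many people must be screened to reach a target number of completes, assuming the observed rate holds. This shows why a low incidence rate makes an audience harder, and often costlier, to reach.

```python
def incidence_rate(qualified: int, screened: int) -> float:
    """Share of screened respondents who qualified for the survey."""
    if screened <= 0:
        raise ValueError("screened must be positive")
    return qualified / screened


def respondents_to_screen(target_completes: int, qualified: int, screened: int) -> int:
    """Estimate how many people to screen, assuming the observed rate holds."""
    if qualified <= 0:
        raise ValueError("no qualified respondents observed")
    # Integer ceiling division avoids floating-point rounding at exact multiples.
    return -(-target_completes * screened // qualified)


# Hypothetical pilot: 150 of 1,000 screened respondents qualified.
print(incidence_rate(150, 1000))              # 0.15
print(respondents_to_screen(500, 150, 1000))  # 3334
```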

Single and multiple selection answer qualifications

We now offer more flexibility for qualifying and disqualifying respondents in the screener. You can qualify and disqualify respondents through both single and multiple selection questions, based on the answers. There are three options that grant you this flexibility:

Disqualified: The respondent cannot take the survey.

Qualified: The respondent can proceed to the next screening question, or if it's the final one, to the survey.

Disqualified unless it's accompanied with at least 1 qualified: This answer disqualifies the respondent unless they are answering a multiple-selection question and also choose a qualified answer. In that case, they can proceed to the next screening question or, if it's the final one, to the survey.
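The three options can be modeled as a small rule check. This sketch is a hypothetical illustration of the behavior described above, not Pollfish's implementation. It assumes a plain Disqualified answer always disqualifies, even when a qualified answer is also selected. The pet labels echo the earlier dog-owner example.

```python
from enum import Enum


class Rule(Enum):
    QUALIFIED = "qualified"
    DISQUALIFIED = "disqualified"
    # Disqualifies unless the respondent also selects at least one QUALIFIED answer.
    DISQUALIFIED_UNLESS_QUALIFIED = "disqualified_unless_qualified"


def passes_screener(selected, rules):
    """Return True if the selected answers let the respondent continue."""
    chosen = {rules[answer] for answer in selected}
    if Rule.DISQUALIFIED in chosen:
        return False
    # With no Disqualified answer, the respondent continues only if a Qualified one was chosen.
    return Rule.QUALIFIED in chosen


# Hypothetical multiple-selection screener for dog owners.
pet_rules = {
    "Dog": Rule.QUALIFIED,
    "Cat": Rule.DISQUALIFIED_UNLESS_QUALIFIED,
    "Fish": Rule.DISQUALIFIED_UNLESS_QUALIFIED,
    "I don't have a pet": Rule.DISQUALIFIED,
}
print(passes_screener(["Cat", "Dog"], pet_rules))   # True
print(passes_screener(["Cat", "Fish"], pet_rules))  # False
```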

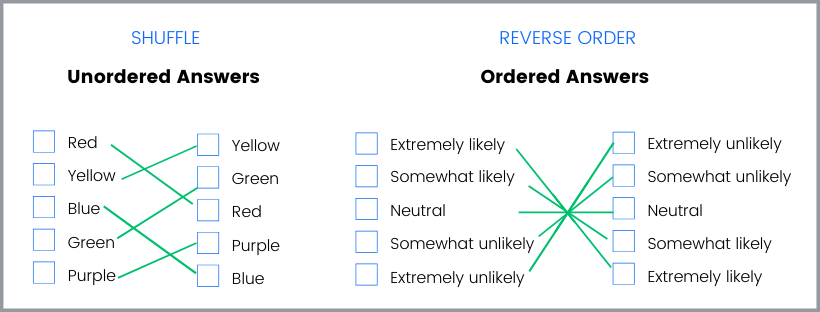

Remember to shuffle answers

As with other survey questions, screening question answers should be shuffled when they form an unordered set. If the answer choices are ordered, such as those on a Likert scale, reverse the order for some respondents to provide some randomization. Keep the sequence itself intact so as not to confuse respondents.
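Here is a minimal sketch of that randomization rule, assuming answers are randomized separately for each respondent. Unordered answers are shuffled. Ordered scales keep their sequence and are only reversed for roughly half of respondents. The `present_order` helper is hypothetical.

```python
import random


def present_order(answers, ordered, rng=random):
    """Return the answer choices in the order one respondent will see them."""
    if ordered:
        # Keep the scale's sequence; flip its direction for about half of respondents.
        return list(reversed(answers)) if rng.random() < 0.5 else list(answers)
    shuffled = list(answers)  # copy so the original list is left untouched
    rng.shuffle(shuffled)
    return shuffled


rng = random.Random(42)  # seeded only to make the demo repeatable
scale = ["Strongly agree", "Somewhat agree", "Neutral", "Somewhat disagree", "Strongly disagree"]
pets = ["Dog", "Cat", "Fish", "Bird"]
print(present_order(scale, ordered=True, rng=rng))
print(present_order(pets, ordered=False, rng=rng))
```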

Don’t overuse

Screening questions are powerful when used correctly, and are a great way to narrow in on behavioral attributes that can’t be achieved through regular targeting. However, when too many screening questions are applied, the incidence rate drops, respondents can become confused, and ultimately results will suffer as the audience becomes less representative of a total population. Try to use as few screening questions as possible to maximize your survey’s reach, ideally fewer than 3 screening questions.
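The arithmetic behind this is simple. If each screener passes a certain share of the respondents who reach it, the overall incidence rate is the product of those shares. It therefore shrinks with every screener you add. The 40% pass rate below is made up for illustration.

```python
from math import prod


def combined_incidence(pass_rates):
    """Overall incidence when respondents must pass every screener in sequence.

    Each rate is the share of respondents reaching that screener who pass it.
    """
    if any(not 0 <= r <= 1 for r in pass_rates):
        raise ValueError("pass rates must be between 0 and 1")
    return prod(pass_rates)


# Hypothetical: each screener qualifies 40% of the people who reach it.
for n in range(1, 4):
    print(n, round(combined_incidence([0.4] * n), 3))
# 1 0.4
# 2 0.16
# 3 0.064
```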

Don’t use them if you don’t need to

Screening questions should be used as an additional layer of targeting, not as a replacement for the regular targeting parameters. Demographic targeting filters are easier to segment and control than self-reported behaviors in screening questions, and they let your survey reach a broader audience. Check all of the available targeting filters for a survey and use them first. Then add a screening question (only if necessary) to supplement the targeting criteria.

Benefits of using screening questions

Screening questions provide a number of benefits. When designed properly, survey screening can reduce overall cost by eliminating respondents who do not fit the criteria early in the survey. Screening questions are especially useful for businesses looking to reduce overall research costs by limiting the amount of unusable data.

The most common uses of screening questions are to identify populations of interest that:

- Share a similar opinion

- Behave in specific ways

- Have similar experiences

Brands, agencies, and other businesses commonly use screening questions for two purposes. The first is to identify audiences that are loyal to competitors or that exhibit desired behaviors (such as frequently purchasing a type of product). The second is to survey their current target audience on new features, packaging, or products.

To learn how to use screening questions for the most effective targeting on the Pollfish platform, you can check out our expert tips from the customer experience team.

How to find survey respondents

You have questions that need answers; all you need now is an audience to complete your survey. But how do you find survey respondents, and how do you make sure they are a good match for the questions you will be asking?

We’ll dive into the pros and cons of some of the most common ways of finding survey respondents that should be considered before choosing the best approach for you.

Harness the power of social media

Most people stay connected digitally in one form or another, whether through Facebook, LinkedIn, Reddit, or other platforms. Each of these places offers a built-in audience that you can survey.

Pros

- Reaching out to social media audiences is free.

- There’s no limit to how many potential respondents you could have.

- Some platforms, like Instagram, offer polls that may work for short, simple surveys.

Cons

- Social media was not designed for survey distribution, and your network is not large or randomized enough to serve as an accurate sample audience.

- Targeting is extremely limited. Even if you join a group that is specific to your survey (for example, if you want to reach parents of toddlers, you may join a group for mothers sharing parenting tips), you will run into audience bias, as those who are actively supporting a brand or idea on social media tend to be more vocal than the average member of the group.

- No control. You won’t be able to verify information about who takes your survey, or how many times. If your survey is a link, respondents could share or email it outside of your desired target market.

- Limited functionality. Even with built-in survey features, like Instagram’s polling feature, you're limited in what you can ask. These features are not complex enough for a full questionnaire or nuanced reporting.

Do-it-yourself with survey research tools

DIY research tools are one of the easiest and most affordable ways to reach respondents at scale. Most come with the option to buy responses from an audience as part of the package, and there are many different survey tools to choose from based on your needs.

Pros

- DIY research tools find participants and manage the distribution for you, which takes all the work of finding respondents off your plate.

- Most of the tools available are incredibly affordable. Services like Pollfish begin at just $1 per completed response and results can come in less than a day.

- Targeting is MUCH better than any comparable option for this price point. Survey tools vet respondents to verify demographic information, ensuring that surveys are matched to the right audience.

- There are many more question types, meaning you can gather data from a variety of different angles. Customize your questionnaire to specific respondents using branching or add media to offer better context.

Cons

- If you’re not well-versed in research, some survey tools can be harder to use. Choose options that offer live support when possible, to help you through tough spots.

- Although many DIY survey tools are affordable, they do come with a price tag. Make sure you know how much your survey will cost before you launch, and don’t get tricked by survey tools that charge for the audience and the questionnaire builder separately.

- Delivery method is important with DIY surveys—make sure your tool lets you meet respondents where they are: on their smartphones. Mobile survey delivery ensures broader reach and faster results than other methods.

Buy a list of email addresses

Buying a list is an older, email-based approach to conducting surveys. You can buy a list of email addresses from a company that specializes in building these based on psychographic or demographic criteria and manage the distribution of it yourself.

Pros

- Email lists for B2B audiences can be targeted to specific titles and companies you’re looking to reach.

Cons

- Email lists are expensive, slow, and require a lot of collaboration with the company you bought them from to distribute the survey.

- That B2B audience you paid a premium for? They’re getting too many emails as it is. The odds of them responding to your survey are extremely low.

- If you’re reaching an audience in Europe, list-purchasing will violate the consent rules of GDPR, which could result in significant fines.

Pay-per-click

You can pay for distribution methods to get your survey in front of more people. Google AdWords or sponsoring your survey on a social media post could broaden your audience digitally.

Pros

- You will broaden and randomize the reach of your survey beyond what you would be capable of using social media or emailing a list.

- You have more control over targeting than you would with social media or an email list (although not as much as with DIY survey tools).

Cons

- You’ll get a lot of impressions, but PPC cannot promise click-through rates.

- Expect to pay a lot for clicks when you get them, whether or not they come from the audience you want. PPC cannot guarantee that the people you targeted are the only ones who will try to complete the survey.

Hire an agency

If all else fails, you can always hire a market research agency. MRAs are made up of professional researchers who can hold your hand through the process, which may be necessary for especially complex projects.

Pros

- You can be completely hands-off. The agency will find survey respondents and manage the entire process for you.

- You’ll have the expertise of professional researchers leading your project, which might be worth the price tag for an especially complex research initiative.

- Your results will be packaged nicely. Instead of allowing you to be overwhelmed by analytics, your research team will present a report of the findings.

Cons

- Like all agencies, MRAs come with a heavy price tag. Expect to pay a premium for high-touch service.

- MRAs are slower than other options. Depending on the urgency of your project, this may not be feasible, especially when instant insights are available.

- Expect a contract with your agency and be prepared to take on the cost and risk of signing on for a full project.

Survey respondents are everywhere if you know where to look. Remember to always consider not only the size of the audience but the ability to reach a specific segment that is representative of the target population for best results.

Pollfish reaches an audience of over 670 million real consumers engaged on their mobile devices, with advanced targeting and distribution all in one. We’ll help you find a global audience for your survey and get professional support along the way.

How to write good survey questions

Good survey questions lead to good data. But what makes a survey question "good" and when is the right time to use specific types?

At Pollfish, we have distributed tens of thousands of surveys and manually review every one, so we know a thing or two about writing good survey questions. Our experts have compiled the essentials below into a questionnaire template of sorts. It will help make sure you have what you need to create great surveys and get the highest-quality data.

1. Have a goal in mind.

Consider what you’re trying to learn by conducting this survey. Do you have an idea that you want to validate, or are you hoping that you can disprove an assumption under which you have been operating? Surveys work best when they focus on one specific goal. When building the questionnaire for your survey, it is important to offer questions that support your goal.

2. Eliminate jargon.

Just because a concept is clear to you doesn’t mean your target audience is on the same page. A well-designed questionnaire certainly contains good survey questions. It also uses plain language (no jargon), explains concepts or acronyms that customers may be unfamiliar with, and offers an opt-out for those who are unsure. Don’t be afraid to use more than one question or offer an example to clarify complex information in your questionnaire; a confused audience leads to frustration and low-quality responses.

3. Make answer choices clear and distinct.

When multiple-choice answers are presented, the respondent must make a selection. If these responses overlap or are confusing for the respondent, the quality of the data decreases because they aren’t sure what is being asked of them. Make sure answers are distinct and specific whenever possible so the respondent can confidently choose the best answer.

4. Give users an “other” option.

In a multiple-choice question, make sure you’ve given respondents the chance to opt out if the question doesn’t apply to them or if none of the answers fit. Provide an option like “no opinion,” “neutral,” or “none of the above.” You can also offer the option to select “other” and provide an open-ended response that can give you more context.

5. Avoid “yes/no” screening questions.

Screening questions help you connect with a qualified audience at the beginning of the survey. When respondents select a qualified response, they will enter the rest of the survey. However, people are biased toward choosing “yes” or a positive response when presented with a yes/no question, even if their real opinion is more neutral. To reduce bias, provide a list of answer choices with no indication that one is preferred over the others.

6. Don’t ask two questions at once.

Each question should focus on obtaining a specific piece of information. When you ask two questions at once using “and” or “or,” you introduce a second question that may have a different answer. This has one of two results. Either you confuse your respondents, who are forced to answer only one of the questions, or your respondents confuse you, because you can’t tell which question they answered. Either way, make sure you ask for separate pieces of information in separate questions.

7. Use skip logic when applicable.

Skip logic, or branching, allows you to create multiple question paths based on an earlier answer. Only respondents qualified for a topic are asked the more in-depth questions about it. This reduces answers like “don’t know” or “no opinion” later in the survey.
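Skip logic can be pictured as a small graph. Each question has a default next question plus optional answer-specific branches. The questionnaire, question IDs, and `route` helper below are hypothetical and only illustrate the idea. Respondents without a car never see the car-maintenance questions, so they never have to answer them with "don't know".

```python
# Hypothetical questionnaire: a default next question plus answer-specific branches.
QUESTIONS = {
    "Q1": {"text": "Do you own a car?", "next": "Q2", "branch": {"No": "Q4"}},
    "Q2": {"text": "How often do you service your car?", "next": "Q3", "branch": {}},
    "Q3": {"text": "How satisfied are you with your mechanic?", "next": "Q4", "branch": {}},
    "Q4": {"text": "How do you usually commute to work?", "next": None, "branch": {}},
}


def route(answers, start="Q1"):
    """Return the IDs of the questions a respondent sees, given their answers."""
    path, current = [], start
    while current is not None:
        path.append(current)
        question = QUESTIONS[current]
        # Follow a branch if this answer has one; otherwise go to the default next question.
        current = question["branch"].get(answers.get(current), question["next"])
    return path


print(route({"Q1": "Yes"}))  # ['Q1', 'Q2', 'Q3', 'Q4']
print(route({"Q1": "No"}))   # ['Q1', 'Q4']
```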

8. Use different question types.

Respondents offer better and more thoughtful answers when they are engaged. And that means asking several types of research questions. Use ranking, matrix, open-ended, or multiple-choice questions to stimulate them and keep them interested, especially in a longer questionnaire. Different question types not only keep the respondents engaged (which can increase your completion rates) but can also elicit different responses.

9. Shuffle answer choices for ranking, matrix, and multiple-choice questions.

We are naturally inclined toward the first information we are presented with (the top answer) in a series of answer options. Shuffling the order of the answer choices reduces this bias in responses. However, some answers relate to one another, such as a Likert scale or a timeline. For these, it’s helpful to keep an order that flows logically to avoid confusion or misreading.

10. Add media or images to provide helpful context.

Media—such as images, video, or audio clips—provides another level of clarity to your survey questions. You can use these either to give added context to the question or offer media as an answer choice.

11. Always keep the audience in mind.

Remember as you are writing the questions to always keep your target audience in mind. The audience can be as broad as the “general population” or as narrow as you need it to be. The important thing is to know who you want to target so you can communicate with them effectively.

Regardless of the types of survey questions you select, questions should be short, clear, and to the point, but also engage the respondent through multi-media and question types. Remember that the less confused your respondents are, the clearer your data will be.

These best practices will help you write good survey questions on any platform, including our own. If you have additional questions specific to Pollfish, check out our resource center or reach out to our Support team to learn more.

What is a Rating Question? Definition, Examples & More

What is a rating question?

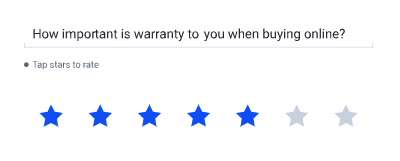

Rating questions (see also: slider questions) allow participants to weigh answers, or assign numerical values to them, via a graphical interface. Examples include a simple 1-5 star rating system or a 0-100 scale where a higher number is a better score.

Rating questions are particularly effective on mobile because of their graphical user interface and simple tap-to-enter or drag-and-drop functionality.

Rating questions can be used to evaluate a variety of topics or stimuli, including statements, images, or videos.

Adding rating questions to a mobile survey

Since most mobile survey users prefer simple gestures that can be performed with one hand, these questions are favorites among respondents for their ease of use: answering takes only a tap or a drag-and-drop.

The information you gain covers a single parameter: the respondent's preference for a particular piece of content.

You can use a series of survey rating questions to understand preferences for different topics and then compare the results.

You may also consider including different types of survey questions to avoid participants falling into a response pattern.

Rating Questions Examples

Here are some rating question examples you can use when you create a survey with Pollfish.

Star Ratings

Respondents select a rating out of 5 stars, as on Yelp (film critics such as Roger Ebert used a similar star system). This is the simplest form of rating question and provides the cleanest data set. However, the nature of the question limits the depth of response you can get from it.

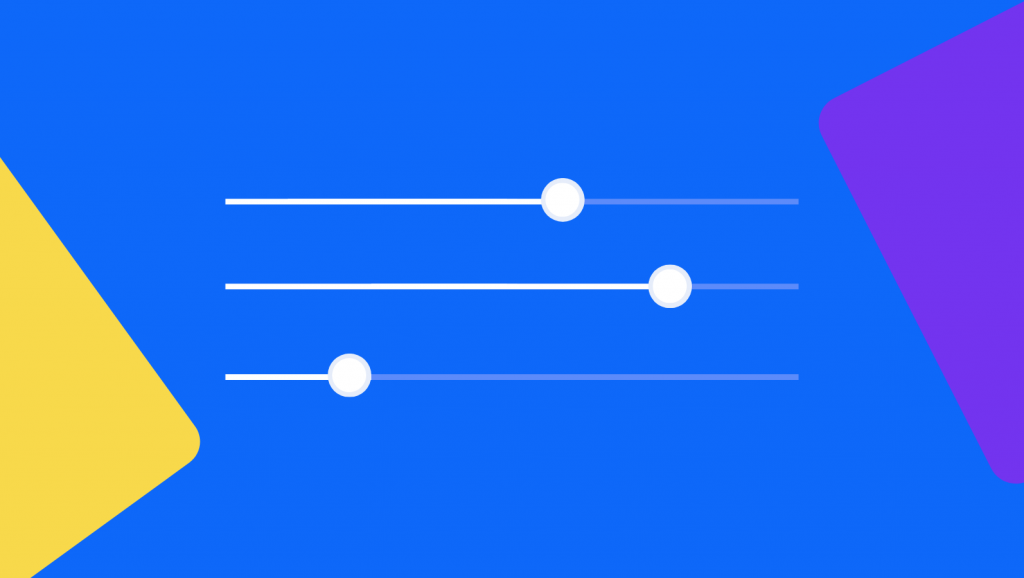

Slider Ratings

A scale of 1-100 will allow users to rate performance. This question type provides a wider distribution of responses.

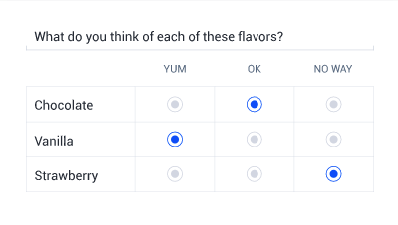

Single-Selection Matrix Question

Respondents rate several items on a matrix of possible options. Because each row accepts only one response, you can ask for a rating on multiple features or products inside the same question.

Slider questions in mobile surveys

Slider questions are a form of survey rating question where the user inputs feedback via a graphical interface.

There are several advantages to using the slider question type on your mobile survey, including:

- They are fun and engaging to users

- They allow for more specificity than a simpler rating scale

- Users are often more inclined to answer truthfully given the flexibility to rate as they see fit

However, results from a slider rating can spread across a wider variety of responses, which can make it harder to interpret the overall sentiment of the sample.

Grouping these numerical responses into ranges based on the ratings provided can be helpful in this interpretation, assuming your survey provider supports answer grouping with this type of survey question.
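To illustrate answer grouping, the Python sketch below counts 0-100 slider responses in labeled ranges. The five groups, their labels, and the `group_responses` function are hypothetical choices. The sketch also assumes the slider records whole numbers. Your survey provider's grouping feature may work differently.

```python
# Hypothetical answer groups for a 0-100 slider; bounds are inclusive
# and assume the slider records whole numbers.
GROUPS = [
    ("Very negative (0-20)", 0, 20),
    ("Negative (21-40)", 21, 40),
    ("Neutral (41-60)", 41, 60),
    ("Positive (61-80)", 61, 80),
    ("Very positive (81-100)", 81, 100),
]


def group_responses(values, groups=GROUPS):
    """Count how many slider responses fall into each labeled range."""
    counts = {label: 0 for label, _, _ in groups}
    for value in values:
        for label, low, high in groups:
            if low <= value <= high:
                counts[label] += 1
                break
        else:  # the inner loop found no matching group
            raise ValueError(f"response {value} is not covered by any group")
    return counts


print(group_responses([5, 18, 35, 50, 55, 72, 90, 100]))
```

Five counts are far easier to read as overall sentiment than dozens of distinct slider values.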

Single-selection question design

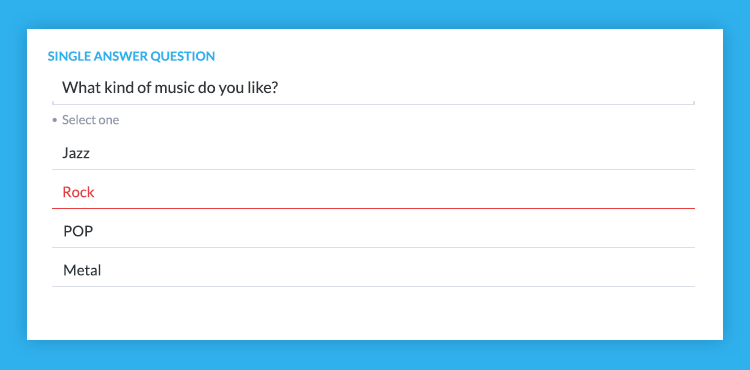

Single-selection, or single-answer, questions ask a user to pick only one answer from a predetermined set of two or more options. They are one of the most common survey question types and are effective in determining a user’s primary preference among a set of choices.

The most common single-selection questions are multiple choice questions, where users are given a list of responses and asked to pick the best answer.

Why are single-selection questions so popular?

- They are closed-ended: you provide answers that a user may not have thought of.

- Their results are easy to analyze quantitatively.

- They're easy for participants to answer on mobile devices, which keeps them engaged.

Single-selection and multiple choice questions: Examples & Best Practices

How to write multiple choice questions

Traditional multiple choice questions (like those on the SAT) contain three parts: the stem (the question or incomplete statement), the correct answer, and the distractors.

In mobile market research surveys, multiple choice questions can be used to gauge user opinion.

Multiple choice survey questions are popular for several reasons:

- Everyone has seen them before so there is no confusion as to what to do.

- They are easily consumed on mobile.

- They make reporting very easy.

Just like with traditional multiple choice questions, you need a stem. But there is, of course, no correct answer, just opinions, so all answers hold equal weight.

When creating a stem, write a question with a single-select answer. Make sure there is no ambiguity that would let someone select multiple responses, as this creates confusion and produces incomplete results. You can also write an incomplete, or "fill-in-the-blank," statement and have the user select the answer that completes it.

There are many different kinds of multiple choice questions, but single-selection questions provide straightforward responses that are easy to work with once data collection is complete.

Yes/No answers may be polarizing

Yes/no questions may provide limited insight, depending on the question. You can offer more than two answer options instead, for example:

A) First Answer

B) Second Answer

C) Third Answer

D) Fourth Answer

Don’t offer too many choices

Too many choices force a user to scroll, or to take time comparing an answer at the bottom of the list with one at the top. You want the most natural response possible, without a user “speed-selecting” the first answer choice as a result of researcher or participant bias.

Scale questions work well on mobile

Another popular type of single-selection question is the Likert scale question. These questions let users select from a scale of responses, indicating how they feel about a statement, such as one about customer satisfaction. This question type tends to provide the best experience on mobile. For example:

Strongly Agree

Agree

Neutral

Disagree

Strongly Disagree

Single numerical values vs number ranges

You don't have to stick to single numbers; you can also ask users to choose between numerical ranges. Just be sure the ranges don't “overlap” (for example, 18-25 and 25-34 both include 25), as this can confuse the respondent.
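If you build range-based answer options programmatically, a quick overlap check can catch mistakes before launch. The Python sketch below is a hypothetical helper. It assumes each option is an inclusive (low, high) pair of whole numbers.

```python
from itertools import combinations


def find_overlaps(ranges):
    """Return every pair of inclusive (low, high) ranges that share a value."""
    return [
        (a, b)
        for a, b in combinations(ranges, 2)
        if a[0] <= b[1] and b[0] <= a[1]  # standard interval-intersection test
    ]


print(find_overlaps([(18, 25), (25, 34), (35, 44)]))  # [((18, 25), (25, 34))]
print(find_overlaps([(18, 24), (25, 34), (35, 44)]))  # []
```

The helper compares every pair rather than only neighbors. This means that a wide range hiding several narrower ones is also caught.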

Considerations for designing a mobile survey

Where appropriate, answers to single-answer questions should be shuffled, or randomized, to remove some of the survey bias and help ensure that the participant is selecting the best choice. Researchers tend to write answers in the order they expect them to be answered, and respondents tend to choose the first answer they are presented with.

Pollfish also lets you anchor the last answer by selecting “Shuffle but keep the last one fixed”.
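Conceptually, anchoring means shuffling every option except the last. The Python sketch below illustrates that behavior under our own assumptions. `shuffle_keep_last` is a hypothetical helper and does not reflect how Pollfish implements the setting internally.

```python
import random


def shuffle_keep_last(options, rng=None):
    """Shuffle all options except the last, which stays anchored at the end."""
    if len(options) < 2:
        return list(options)
    rng = rng or random.Random()
    head = list(options[:-1])  # the options that are allowed to move
    rng.shuffle(head)
    return head + [options[-1]]


choices = ["Brand A", "Brand B", "Brand C", "None of the above"]
print(shuffle_keep_last(choices))  # "None of the above" is always last
```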

There are times when you do not want to shuffle answers, such as when asking for a numerical response.

You may also want to ask the same single-selection question in a slightly different way elsewhere in your survey to validate that the responses are consistent, reliable, and credible.

If you already have a list of answer choices, batch answers can speed up your survey design and save you from typing individual answers one at a time. If the list is on separate lines in an Excel sheet, Word document, or similar, simply select “Add batch answers” and copy and paste your choices from your source document.

Advanced Tip: If you want to copy the answers from a previous question you created, select “Add batch answers” there and the pop-up window will have your choices available to copy and paste.
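Pasting a line-separated list works because each line becomes one answer choice. The Python sketch below shows one plausible way to perform that split. `parse_batch_answers` is a hypothetical helper. The whitespace trimming and the removal of blank and duplicate lines are also assumptions, not a description of how Pollfish's “Add batch answers” feature works.

```python
def parse_batch_answers(pasted_text):
    """Turn pasted text into answer choices, one per non-empty line."""
    answers = []
    for line in pasted_text.splitlines():  # handles \n and \r\n line endings
        choice = line.strip()              # drop stray spaces or tabs
        if choice and choice not in answers:  # skip blanks and repeats
            answers.append(choice)
    return answers


pasted = "Pool\r\nGym\n\n  Bar  \nGym\n"
print(parse_batch_answers(pasted))  # ['Pool', 'Gym', 'Bar']
```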

Rank order survey questions (and how to use them)

Rank order survey questions (also called Ranking questions) enable participants to compare items and rank them according to their preference.

The Benefit of Rank Order Survey Questions

The benefit of ranking over other survey question types, like multiple answer survey questions or rating questions, is that you gain critical data about a participant's preference for one item compared to another, rather than choosing multiple answers without ranking them or rating each item individually.

Ranking Survey Questions vs Rating Survey Questions

Ranking survey questions differ from rating questions in that participants are forced to state a preference for one item over another, whereas in a rating question, two items may receive the same score. For example:

“Rank your preference on the hotel amenities during your stay”

- Pool

- Gym

- Bar

- Fast Check-in

will provide different information than

“On a scale of 1-5, please rate the following hotel amenities”

- Pool

- Gym

- Bar

- Restaurant

In the latter, all categories may receive a “5,” which tells you how satisfied a guest is, whereas a ranking question may reveal which amenity is most appealing.
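A common way to summarize ranking responses is each item's average (mean) rank across respondents, where a lower number means the item is more preferred. Unlike a set of all-“5” ratings, this summary produces a clear order. The Python sketch below uses made-up responses for the hotel example. `mean_ranks` is a hypothetical helper, and mean rank is only one of several aggregation methods. A Borda count is another option.

```python
def mean_ranks(rankings):
    """Average each item's position across respondents (1 = most preferred).

    Each inner list is one respondent's ranking, most preferred first.
    Returns (item, mean rank) pairs sorted from most to least preferred.
    """
    positions = {}
    for order in rankings:
        for position, item in enumerate(order, start=1):
            positions.setdefault(item, []).append(position)
    averages = [(item, sum(p) / len(p)) for item, p in positions.items()]
    return sorted(averages, key=lambda pair: pair[1])


# Made-up responses for illustration only.
responses = [
    ["Pool", "Gym", "Bar", "Fast Check-in"],
    ["Fast Check-in", "Pool", "Bar", "Gym"],
    ["Pool", "Fast Check-in", "Gym", "Bar"],
]
for item, avg in mean_ranks(responses):
    print(f"{item}: {avg:.2f}")
```

With these sample responses, the output lists Pool (1.33), Fast Check-in (2.33), Gym (3.00), and Bar (3.33), in that order.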

How To Write Ranking Survey Questions

Unlike other types of survey questions, which may tell you how much a user enjoyed your product or service, ranking questions are often used to determine what about the experience was most valuable.

For example, if you ran a Mexican restaurant, you may want to know the most popular Mexican dish among Mexican food fans in your area. Now say you plan to invest in certain parts of your business, such as a new menu, top-shelf ingredients, or a renovation, but you only have the budget for three projects. It would also help to know which parts of your business customers already love and which they feel could use some improvement.

Here's where ranked-choice survey questions are a must.

Just a few examples for your Mexican restaurant:

Please rank the following in order of importance

- Great cocktails

- Vegetarian options

- A delicious dessert menu

- A top-shelf tequila list

- Ample parking

- Speedy pick-up service

Please rank your favorite Mexican restaurants in the area

- Sleepy Joes

- Cantina Dos Segundos

- Casa Taqueria

- Rosa Mexicana

- Jose Pistolas

- Chipotle

Best Practices for Ranking Survey Questions

Don't read too much into the lower rankings

Once people have selected their favorites or their least favorites, results can get a little noisy. Most people clearly know what they like and don't like, and may just be selecting in the middle to get the question over with. Make sure to take this into account.

Aim for six items

Six items in a ranking question allow for a full range without the imprecise answers that longer lists can produce. With six items, you can clearly determine a top 3, or get a high end and a low end with enough options in between. In our experience, lists of 6 to 10 items usually work well.

Shuffle items

If your survey platform allows it, set items to shuffle. This reduces survey bias: in some cases, the top result may be ranked higher simply because it is first on the list.

Split longer lists into categories

If you have a very long list of product features that you want ranked, a single long ranking list will produce messy data that is hard to use. Try splitting your items into categories, keeping lists short and creating multiple questions. This will increase response rates and give you a better dataset.

Frequently asked questions

What are rank order survey questions?

Rank order survey questions, also called Ranking questions, enable participants to compare items and rank them according to their preference.

What is the benefit of Rank Order Survey Questions?

The benefit of rank order survey questions over other survey question types (multiple answer survey questions, rating questions) is that you gain critical data about a participant's preference for one item compared to another, rather than choosing multiple answers without ranking them or rating each item individually.

How can you reduce survey bias in rank order survey questions?

You can reduce survey bias in rank order questions by shuffling the items, if your survey platform allows it. In some cases, the top result may be ranked higher simply because it is first on the list.

How do rank order survey questions differ from other types of survey questions?

Unlike other types of survey questions, which may tell you how much a user enjoyed your product or service, ranking questions are often used to determine what about the experience was most valuable.

How to run effective consumer surveys

If you want more customers, it is worth listening to the feedback provided by the ones you already have. Discovering and understanding what they think about your product/business/brand is critically important to successfully scale your business.

One way to find out how your customers are feeling is through surveys. With consumer surveys, you can gather valuable information about their experience and adjust accordingly. This pays off in the long run, as listening to customer feedback can improve people’s experience and help build a loyal customer base.

Surveys can give you the information to help you improve the experience of existing customers but can also help you decide the best way to reach new customers in the future. By finding out how you are viewed by your customers, you can adjust and adapt to create a better experience for everyone. Here are a few tips for writing the most effective customer feedback survey:

Construct a short, yet compelling invitation

The invitation is often the make-or-break factor in whether the recipient clicks through to and completes the survey. Keep this invitation as brief as possible, but without leaving out key details. Make sure that the recipient knows it is coming from your company, and briefly explain what the survey will be about. This context refreshes customers’ memories and increases the chance of getting better results.

Keep it short

No one wants to spend their valuable free time answering hundreds of questions about your company. So keep your survey concise without sacrificing your goal: cut unnecessary questions and make the questions you keep specific and useful.

Optimize for mobile

Over 50 percent of most demographic groups have a smartphone, and this number is only increasing. Since the average person checks their phone up to 150 times a day, chances are you will connect with your audience a lot sooner than on other platforms. When you optimize for mobile, your survey will reach more people, and by extending its reach, you will also get responses faster. There is no better way to connect with consumers in the 21st century than reaching them where they are most accessible: on their mobile devices. In this way, you can reach existing or potential customers and learn what they think within hours of creating your survey.

Offer incentives

Depending on your audience, offer incentives or prizes for completing the survey. Incentives generally lead to more and faster survey responses, but they also occasionally lead to rushed answers and incomplete submissions. Make sure the incentive fits the survey and the targeted audience. If you have crafted a compelling invitation, your survey response rate should increase on its own. However, everyone appreciates added incentives.

At Pollfish, you can create custom consumer surveys to find out exactly what your customers think. For example, you can attach videos if you want consumer feedback on a new advertisement. You can also add targeting if you want to find out what a certain demographic of your customers thinks about certain issues.

Nonprofit Surveys: Fundraising, Volunteer Satisfaction & More

Are your fundraisers successful? Are your volunteers happy? Did anyone actually like those homemade biscuits last week? A cheap and easy way to get answers to questions like these is through nonprofit surveys. This can help your organization gain a better understanding of people’s experiences, why people donate and how your events could be improved. Ultimately, surveys can give you the data to improve the effectiveness of your organization and increase the awareness of your cause.

With a large network of audience members and specific targeting options, you can reach the respondents you need. Here is how to get the best responses to a nonprofit survey:

Write a welcoming introduction.

People want to know that their responses will contribute to something good. Be sure to include an introduction to your survey telling people what their responses will be used for and how you will use them to improve your organization.

Keep your questions short and sweet.

Clarity is key. Stick to the point and ask questions with specific objectives. This way, your target demographic won’t zone out answering loads of unnecessary questions, and you’ll get responses to the questions you need answered. It is also a good idea to home in on exactly what you want to find out and who should be answering these questions. Are you focusing on those who regularly attend fundraisers, or on newcomers you want to attract? Be sure to check out Pollfish's targeting and screening questions to narrow down who your survey will be sent to and see how large your survey audience is.

Follow up!

The most successful surveys occur over time, meaning you will get answers from your respondents over many successive surveys, so be nice to them. Thank them sincerely for taking the time to answer your questions. People are happy to help a genuine organization with a good cause.

We understand that as a nonprofit organization you need tools that are inexpensive and quick. With Pollfish surveys starting from as little as $1 per survey and with results available within hours, we're here to help. We even offer 24/7 communication with our customer support team to help you with anything regarding your nonprofit survey.

Remember to shuffle answers
