16 Steps to a Perfect Conjoint Analysis

Conjoint analysis can feel like an intimidating labyrinth, but it’s actually one of the most powerful tools for unlocking consumer preferences. Done right, it can help you translate complexities into reliable insights that drive product design, pricing, and positioning decisions.

1. Conjoint Analysis Fundamentals 🧩

Conjoint analysis is a research technique that breaks down product or service choices into key attributes, then systematically varies those attributes to see how each one influences preferences.

By focusing on trade-offs, it mirrors the real-world thought process consumers face when they’re forced to pick one option over another. Traditional survey questions often fall short because they don’t reveal the relative importance of price versus feature sets, or brand name versus convenience. With conjoint, you can untangle those competing demands in a more granular, sophisticated way. The end goal is to measure not just what people want, but how much they’re willing to “give up” to get it.

An example question in a tech brand’s conjoint study might be:

“If you were choosing between two smartphone packages, which would you be more likely to buy?”

Option A: longer battery life, standard camera, premium brand name

Option B: standard battery life, high-end camera, lesser-known brand
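Behind a question like this, most conjoint models treat a profile's appeal as the sum of the utilities ("part-worths") of its attribute levels. Here's a minimal sketch of that additive model for the smartphone example; the part-worth numbers are hypothetical placeholders, not estimates from any real study.

```python
# Hypothetical part-worth utilities for the smartphone example.
# In a real study these would be estimated from respondents' choices.
PART_WORTHS = {
    "battery": {"standard": 0.0, "longer": 0.8},
    "camera": {"standard": 0.0, "high-end": 0.6},
    "brand": {"lesser-known": 0.0, "premium": 0.5},
}

def total_utility(profile: dict[str, str]) -> float:
    """Additive model: a profile's utility is the sum of its levels' part-worths."""
    return sum(PART_WORTHS[attr][level] for attr, level in profile.items())

option_a = {"battery": "longer", "camera": "standard", "brand": "premium"}
option_b = {"battery": "standard", "camera": "high-end", "brand": "lesser-known"}

print(round(total_utility(option_a), 2))  # 1.3
print(round(total_utility(option_b), 2))  # 0.6
```

With these made-up numbers, Option A wins because the longer battery and premium brand together outweigh the better camera. Estimating real part-worths from many such choices is what the conjoint model does for you.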

2. Zeroing In on Useful Attributes 🔍

Selecting the correct attributes is arguably the single most impactful step you can take in designing a conjoint study. Too many attributes can overwhelm respondents, while too few might overlook critical product aspects that sway decisions. Ensuring these attributes are distinct and mutually exclusive is also essential to minimize confusion and glean accurate weightings. For example, when comparing two candidate attributes, "type" does a better job of staying mutually exclusive than "perfume" does.

Consider a hypothetical beverage brand comparing packaging type, sugar content, flavor variety, and brand endorsement as separate attributes, rather than mixing them up into one. Each attribute should reflect a meaningful variation—something that genuinely influences consumer choice.

For instance, a coffee brand might ask:

“Which of the following coffee bag designs would grab your attention most?”

Option A: a large, eco-friendly package with a minimalist design

Option B: a compact, travel-friendly package featuring vibrant branding
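A quick way to see why attribute count matters is to multiply the number of levels per attribute, which gives the number of distinct profiles in a full factorial design. The level counts below for the four beverage attributes mentioned above are hypothetical.

```python
from math import prod

# Hypothetical number of levels per attribute for the beverage example.
levels = {
    "packaging type": 3,
    "sugar content": 3,
    "flavor variety": 4,
    "brand endorsement": 2,
}

# Every combination of one level per attribute is a possible profile.
full_factorial = prod(levels.values())
print(full_factorial)  # 72

# Adding just one more 3-level attribute triples the design space.
print(full_factorial * 3)  # 216
```

No respondent could evaluate every profile, which is why conjoint relies on a carefully chosen subset of them.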

3. Realistic Attribute Levels 📏

Each attribute must be broken down into levels that mirror actual possibilities in the market. If you’re testing a new streaming subscription, for example, realistic levels for monthly pricing might be $5.99, $9.99, and $14.99 rather than extremes like $0.99 or $59.99. Relevance is crucial; unrealistic price points or impossible feature sets lead to skewed responses.

This is also where you shape the “menu” of consumer options—like different data storage capacities for a new smartphone or various organic certifications for a new snack product. Make sure the distribution of levels reflects real constraints, so you can interpret results with confidence in real-world scenarios.

An illustrative question could read:

“Which of these plans for our new streaming service would you rather subscribe to?”

Option A: $9.99 per month with no ads

Option B: $5.99 per month with limited ads

4. Balanced Experimental Design ⚖️

The underlying mechanics of a conjoint study require a careful balance of attribute-level combinations. Too many combinations and your respondents will feel like they’re taking an academic exam; too few and you risk missing hidden interactions. Design algorithms can help ensure that each level is shown often enough to yield reliable data but not so often as to cause respondent fatigue. The sweet spot often involves design principles like orthogonality and minimal overlap, which help each choice task yield as much information as possible.

For instance, a car manufacturer’s conjoint questionnaire could systematically rotate engine sizes, interior materials, and color options to avoid repeating the same combinations, asking respondents:

“Which vehicle option do you prefer?”

Each time the question appears, a different set of feature-level bundles is shown.
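Dedicated design software usually generates these rotations, but the core ideas of level balance and minimal overlap can be sketched in a few lines. This is a simplified random design, not a true orthogonal array: within each choice task, every attribute draws its levels without replacement, so no level repeats among the alternatives shown together. The engine, interior, and color levels are hypothetical.

```python
import random
from collections import Counter

# Hypothetical car attributes; each has at least as many levels as alternatives per task.
ATTRIBUTES = {
    "engine": ["1.5L", "2.0L", "2.5L"],
    "interior": ["cloth", "leather", "synthetic"],
    "color": ["black", "white", "red"],
}

def make_task(n_alternatives: int, rng: random.Random) -> list[dict[str, str]]:
    """Build one choice task with minimal overlap: no level repeats within the task."""
    draws = {attr: rng.sample(levels, n_alternatives) for attr, levels in ATTRIBUTES.items()}
    return [{attr: draws[attr][i] for attr in ATTRIBUTES} for i in range(n_alternatives)]

def level_counts(tasks: list[list[dict[str, str]]]) -> Counter:
    """Count how often each (attribute, level) pair is shown across all tasks."""
    return Counter((attr, alt[attr]) for task in tasks for alt in task for attr in alt)

rng = random.Random(42)  # fixed seed so the design is reproducible
tasks = [make_task(3, rng) for _ in range(10)]
counts = level_counts(tasks)
# With 3 alternatives and 3 levels, every level appears exactly once per task.
print(counts[("engine", "2.0L")])  # 10
```

When attributes have more levels than alternatives per task, the same idea still avoids overlap, but you'd want to check `level_counts` to confirm the levels stay roughly balanced.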

5. Competitive Benchmarks 🏇

One valuable aspect of conjoint is its ability to include competitor offerings as realistic points of comparison. By inserting a competitive brand attribute—like “Powered by Apple’s iOS vs. Powered by Google’s Android”—you can see how your audience perceives your brand relative to others in the marketplace.

This approach mimics how real-world consumers choose between competing products on the shelf or in the app store. Competitive benchmarks help you identify where your own brand differentiates or falls short. Without such comparisons, your conjoint analysis might show inflated preference for your product if respondents don’t see a credible competitor’s attributes.

A scenario-based question could ask:

“When choosing a new tablet, and considering features like display size and exclusive apps, which would you be more inclined to pick?”

Option A: a brand-new Amazon Fire Tablet

Option B: a similarly priced Apple iPad Mini

6. Post-Conjoint Open Ends 🎧

While conjoint is largely quantitative, incorporating some qualitative questioning can yield richer insights, especially if you want to understand the reasoning behind trade-offs.

Post-conjoint open-ended questions can surface emotional triggers or usage contexts you might not have anticipated. The combination helps you understand not just the “what” but also the “why” behind consumer choices. Hybridizing methods is particularly effective when your product categories are lifestyle-oriented or experience-driven, like tourism or fashion. Even a small dose of qualitative coding can highlight patterns in verbatim responses that your numerical data can’t quite capture alone.

An example post-conjoint follow-up might ask:

“You selected the higher-priced lounge chair with ergonomic support—what features would you consider indispensable in your ideal chair?”

7. Adaptive Conjoint for Complexity 🌐

Adaptive conjoint shifts the specific configurations shown to a respondent based on their answers, preventing the fatigue that can arise when too many attributes are tested. This is especially crucial for complex industries like automotive or enterprise software, where the permutations are endless. By tailoring subsequent questions to a respondent’s earlier preferences, you maintain engagement and collect cleaner data.

The approach leverages computer-based algorithms that pivot in real-time, ensuring no one sees irrelevant or repetitive profiles. This method can be invaluable if your brand wants to test, say, a complex product range of laptops with variations in processor speed, memory, screen size, and connectivity options.

A typical adaptive conjoint question might read:

“Given your previous selection favoring high processor speed, would you choose a 16GB RAM or 32GB RAM laptop if the price difference were $200?”

8. Integrating Emotional Triggers 🤯

Sometimes it’s not just about rational factors like price or performance—emotional resonance can be a decisive factor in consumer decision-making. Incorporating attributes that tap into intangible elements, such as brand prestige or environmental sustainability, can significantly elevate your conjoint analysis. These attributes might be less quantifiable, but they can still be broken into levels—like “Locally sourced materials” vs. “Fair Trade certified.” Emotional triggers can dictate higher willingness-to-pay, even if feature sets are similar. By factoring them in, your conjoint results will better reflect the full spectrum of consumer motivations.

A relevant question for a cosmetics brand might be: “Which of the following would you prefer?”

Option A: a 100% cruelty-free lipstick at $18

Option B: a well-known high-fashion brand’s lipstick at $15

9. Running Sensitivity Simulations 🤏

One of the greatest benefits of conjoint data is the ability to run “what-if” scenarios or sensitivity simulations. You can manipulate different attributes—like removing a premium feature, raising the price, or changing the brand name—to see how it affects overall preference share.

This technique allows you to proactively respond to market shifts without having to run a whole new survey. It’s like having a virtual sandbox for your product strategy, where each tweak to an attribute reveals the potential gain or loss in market acceptance. These simulations often prove invaluable when presenting to stakeholders who crave data-driven insights on how each product decision might pan out.

A hypothetical question that paves the way for such simulations could be:

“If our new cereal brand launched at $4.99 with organic grains vs. $3.99 without the organic claim, which would you buy?”
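Share-of-preference simulators are commonly built on a multinomial logit rule: each product's share is exp(U_i) divided by the sum of exp(U_j) over the products in the scenario. A minimal what-if sketch for the cereal question, assuming a linear price effect and a fixed bonus for the organic claim; both part-worth values are hypothetical.

```python
import math

# Hypothetical part-worths; a real simulator would use your estimated model.
PRICE_UTILITY_PER_DOLLAR = -0.9   # utility lost for each extra dollar
ORGANIC_BONUS = 0.7               # utility gained from the organic claim

def utility(price: float, organic: bool) -> float:
    """Additive utility of a cereal profile under the hypothetical part-worths."""
    return PRICE_UTILITY_PER_DOLLAR * price + (ORGANIC_BONUS if organic else 0.0)

def logit_shares(utilities: list[float]) -> list[float]:
    """Multinomial logit share of preference: exp(U_i) / sum over j of exp(U_j)."""
    top = max(utilities)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(u - top) for u in utilities]
    total = sum(exps)
    return [e / total for e in exps]

base = logit_shares([utility(4.99, True), utility(3.99, False)])
# What-if scenario: drop the organic version's price by 50 cents.
what_if = logit_shares([utility(4.49, True), utility(3.99, False)])

print(f"Organic share at $4.99: {base[0]:.1%}")
print(f"Organic share at $4.49: {what_if[0]:.1%}")
```

Each tweak is just a new set of utilities passed to the same function, which is what makes the "virtual sandbox" cheap to explore once the model is estimated.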

10. Analyzing Price Elasticity 💸

Price elasticity is a critical metric for companies aiming to optimize profit margins without alienating customers. Conjoint is particularly adept at revealing how consumer preference shifts at different price points, giving you a fuller picture of elasticity than a simple “Would you pay $X for this?” question.

By systematically varying price alongside other features, you can estimate the trade-off consumers make between cost and value. This helps you avoid the dreaded “race-to-the-bottom” scenario where lowering price leads to brand devaluation. Conversely, you might find that consumers are willing to pay a premium for features you initially underestimated.

One possible question for a fitness wearable brand could be:

“If the smartwatch cost $50 more but included advanced health tracking, would you still choose it over the basic version?”
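One common way to express that cost-versus-value trade-off in dollars is willingness to pay (WTP): divide a feature's utility gain by the utility of one dollar (the absolute price coefficient). A sketch for the smartwatch question, assuming utility is linear in price; both estimates are hypothetical.

```python
# Hypothetical estimates from a conjoint model with a linear price term.
price_coef = -0.02               # utility change per additional dollar
advanced_tracking_gain = 1.3     # utility of advanced vs. basic health tracking

def willingness_to_pay(utility_gain: float, price_coefficient: float) -> float:
    """Dollar value of a utility gain, assuming utility is linear in price."""
    return utility_gain / -price_coefficient

wtp = willingness_to_pay(advanced_tracking_gain, price_coef)
print(f"Estimated WTP for advanced tracking: ${wtp:.2f}")
print("A $50 premium is below the estimated WTP:", 50 < wtp)
```

This is an aggregate shortcut; with a non-linear price effect or heterogeneous respondents, WTP is better read from the market simulator.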

11. Conjoint with Segmentation 🧬

Conjoint results become even more powerful when layered with robust segmentation data. By dividing respondents into meaningful groups—like brand loyalists, deal-seekers, or tech-savvy early adopters—you can see how attribute preferences vary among segments. This segmentation can direct targeted marketing campaigns or even product variations that cater to each group’s distinct needs.

The synergy of conjoint and segmentation reveals not just what the “average” person wants, but how subgroups prioritize differently. This can make the difference between a one-size-fits-all strategy and a tailored approach that resonates deeply with each consumer segment.

A question that marries these two concepts might ask:

“Between these streaming service bundles, which would you select if you identify as someone who values exclusive content over the lowest price?”
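A common way to compare segments is attribute importance: each attribute's part-worth range divided by the sum of ranges across all attributes. A minimal sketch for two hypothetical streaming segments; every part-worth below is made up for illustration.

```python
# Hypothetical average part-worths per segment for a streaming bundle study.
SEGMENTS = {
    "content seekers": {
        "price": {"$5.99": 0.4, "$9.99": 0.1, "$14.99": -0.5},
        "exclusive content": {"none": -0.8, "some": 0.1, "full catalog": 0.7},
    },
    "deal seekers": {
        "price": {"$5.99": 1.2, "$9.99": 0.0, "$14.99": -1.2},
        "exclusive content": {"none": -0.3, "some": 0.1, "full catalog": 0.2},
    },
}

def importances(part_worths: dict[str, dict[str, float]]) -> dict[str, float]:
    """Attribute importance = range of its part-worths / sum of all ranges."""
    ranges = {attr: max(lv.values()) - min(lv.values()) for attr, lv in part_worths.items()}
    total = sum(ranges.values())
    return {attr: r / total for attr, r in ranges.items()}

for name, pw in SEGMENTS.items():
    summary = ", ".join(f"{attr} {imp:.0%}" for attr, imp in importances(pw).items())
    print(f"{name}: {summary}")
```

Importance depends on the level ranges you chose to test, so compare it across segments within the same study rather than across studies.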

12. Key Driver Analysis 🚀

While conjoint delivers the trade-off metrics, key driver analysis (KDA) can provide the broader picture of how each attribute drives overall satisfaction or likelihood to purchase.

When you combine the data sets, you can see which attributes, when improved, offer the highest lift in product appeal across your target sample. KDA also helps confirm or challenge hypotheses you formed from conjoint alone. Maybe you discover that an attribute with moderate importance in conjoint actually ties to a significant jump in overall brand affinity. These layered insights can effectively prioritize product improvements or marketing messages.

A potential question bridging these ideas might be:

“Rank how each feature of our new line of noise-canceling headphones impacts your overall satisfaction.”

- sound quality

- comfort

- battery life

- brand reputation
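A basic form of key driver analysis correlates each feature rating with an overall satisfaction score; regression or relative-weights methods are common refinements. A minimal sketch, assuming respondents rated each headphone feature and their overall satisfaction on a 1-5 scale; the six respondents' ratings are made up.

```python
from math import sqrt

def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

# Hypothetical 1-5 ratings from six respondents.
overall = [5, 4, 2, 3, 5, 1]
features = {
    "sound quality": [5, 4, 2, 3, 4, 1],
    "comfort": [4, 4, 3, 3, 5, 2],
    "battery life": [3, 5, 2, 4, 3, 3],
    "brand reputation": [3, 3, 3, 4, 3, 3],
}

# Order features by how strongly they move with overall satisfaction.
drivers = sorted(features, key=lambda f: pearson(features[f], overall), reverse=True)
for f in drivers:
    print(f"{f}: r = {pearson(features[f], overall):.2f}")
```

Set next to conjoint importances, a feature that scores modestly in the conjoint but correlates strongly here is exactly the kind of tension worth investigating.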

13. Avoiding Common Pitfalls 🚧

Even an expertly designed conjoint study can stumble if you’re not mindful of common missteps.

One pitfall is overloading the respondent with too many attributes, leading to survey fatigue and questionable data. Another is using attributes that respondents don’t actually care about, skewing the weighting of more relevant factors. Also be cautious of “filler levels” that just muddy the waters rather than reflecting real choices. Keep an eye on the survey length and complexity—nobody wants to dedicate half their day to reading eight variations of the same scenario.

A quick check-in could be a question like:

“Are the choices and attribute levels presented so far clear, or do you need more information before deciding?”

14. The Power of Visualizations 🖼️

Raw conjoint data can be dizzying, especially when you’re staring at a giant spreadsheet of utility scores. Translating these numbers into intuitive charts, heat maps, or simulators can instantly communicate the story behind the data to stakeholders. Visualizations allow for quick comparison of preference shares across different product configurations and segments.

They also make presenting trade-off analyses more persuasive, since you can highlight how each change in an attribute shifts market share. The key is to ensure your visuals are clean, straightforward, and emphasize the insights that matter most. For a gaming company analyzing new console features, a bar chart that shows how preference changes when the price is altered by $50 would be far more impactful than a raw table of regression coefficients.

15. Validate with Real-World Tests 🏝️

Conjoint offers a simulated environment of choices, but final validation often demands some real-world pilot testing or limited releases. This step helps confirm that the hypothetical trade-offs respondents claimed they’d make actually align with their real spending behaviors.

You can think of it as a final reality check—because consumer intentions don’t always match actions. If your conjoint suggests that adding a special flavor to your yogurt brand significantly boosts willingness-to-pay, a small in-store test or eCommerce pilot might be the next logical step. Sometimes, real-world constraints—like shelf space or supply chain hurdles—need to be integrated into the analysis.

An example question bridging these points might be:

“After seeing our test-market results, would you still favor launching the blueberry-lavender flavor at a higher price, or should we adjust the plan?”
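One simple way to quantify that reality check is to compare the simulator's predicted shares with the shares observed in the pilot, for example using mean absolute error. The flavors other than blueberry-lavender, and all the numbers, are hypothetical.

```python
# Hypothetical shares: conjoint simulator prediction vs. test-market result.
predicted = {"blueberry-lavender": 0.32, "classic vanilla": 0.45, "strawberry": 0.23}
observed = {"blueberry-lavender": 0.24, "classic vanilla": 0.50, "strawberry": 0.26}

def mean_absolute_error(pred: dict[str, float], obs: dict[str, float]) -> float:
    """Average absolute gap between predicted and observed shares."""
    return sum(abs(pred[k] - obs[k]) for k in pred) / len(pred)

mae = mean_absolute_error(predicted, observed)
print(f"MAE: {mae:.1%}")
for flavor in predicted:
    gap = observed[flavor] - predicted[flavor]
    print(f"{flavor}: predicted {predicted[flavor]:.0%}, observed {observed[flavor]:.0%} ({gap:+.0%})")
```

A large gap for one product, like the overestimated new flavor here, points to where stated preferences and real behavior diverge.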

16. Scaling Conjoint Globally 🌏

When you operate across multiple countries, conjoint gets more challenging but also more valuable. Cultural differences can shift the relative importance of attributes—while Americans might prioritize convenience, European consumers may focus on sustainability. Translating attributes and levels to resonate locally is key, making sure the same concept isn’t lost in different languages.

Don’t forget to adapt price points, regulation-driven features, and local brand preferences. Properly scaled conjoint can provide data-driven insights to refine product offerings region by region, enhancing global competitiveness. To get there, you’ll need to segment your results geographically.

Ready to Power Your Product and Brand with Conjoint Analysis?

Conjoint analysis may appear daunting, but these steps can turn a potentially unwieldy project into a strategic masterpiece of consumer insight. By methodically balancing attributes, levels, and design intricacies, you’ll gain a nuanced roadmap for product success that can truly transform your brand strategy.

For a basic conjoint analysis, you can get started in seconds on Pollfish. If you're not sure how to go about it, here is a detailed guide on how conjoint analysis works in our platform.

For an advanced conjoint analysis, reach out to our experts here.

Market Research Question Types - Which to Use?

You always want the right tool for the job, yes?

You can't very well use a hammer to saw something in half... I mean, I guess you could just bash something in half with it, but you know what I mean - it's not going to give you a good outcome.

Similarly, you don't want to use the wrong question types for the job. You’ll never draw the right insights, even if the responses are technically accurate. By thoughtfully selecting formats that capture everything from broad strokes (leveraging single and multi selection) to nuanced shades of opinion (leveraging MaxDiff and Conjoint), you can unlock actionable insights that drive smarter decisions and bigger wins.

Here are the 16 main research question types to leverage in your surveys.

1) Single Selection 🎯

Single selection questions are the classic “pick one, any one” format, beloved by market researchers who appreciate simple, definitive answers. For instance, if gaming titan Nintendo wanted to survey Fortnite fans on which console they’re most likely to purchase next, they could pose a single selection question such as:

Q: Which console will you buy in the next 2 years?

- Nintendo Console

- PlayStation Console

- Xbox Console

Responses might yield a clear-cut distribution—say 45% Nintendo, 30% PlayStation, 25% Xbox—allowing Nintendo to see which console is winning the popularity contest. This question type is perfect for quick insights, but be aware that it can oversimplify complex opinions, so it’s best used when you need a decisive choice rather than a list of possibilities.

2) Multiple Selection 🌐

Multiple selection questions let respondents select more than one option, making them great for nuanced consumer behaviors. In a Zarona Cosmetics study, you might ask:

Q: Which product categories do you plan to purchase in the next month?

- Skincare

- Makeup

- Haircare

- Fragrances

- All of the Above

If they ran this question, they could discover that 60% choose Skincare and Makeup together, while only 25% go for Fragrances, revealing cross-category purchase intentions. Multiple selection helps you account for those moments when people can’t choose just one item—much like choosing only one streaming service feels next to impossible.
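Because each respondent can pick several options, multiple selection results are usually reported as the share of respondents choosing each option (these can sum to more than 100%), plus co-occurrence counts for pairs. A minimal sketch with five hypothetical respondents:

```python
from collections import Counter
from itertools import combinations

# Hypothetical multi-select answers: each set is one respondent's selections.
responses = [
    {"Skincare", "Makeup"},
    {"Skincare", "Makeup", "Fragrances"},
    {"Haircare"},
    {"Skincare", "Makeup"},
    {"Makeup"},
]

n = len(responses)
# Share of respondents selecting each category.
category_counts = Counter(cat for r in responses for cat in r)
# Number of respondents selecting each pair of categories together.
pair_counts = Counter(pair for r in responses for pair in combinations(sorted(r), 2))

for cat, c in category_counts.most_common():
    print(f"{cat}: {c / n:.0%}")
print(f"Makeup + Skincare together: {pair_counts[('Makeup', 'Skincare')] / n:.0%}")  # 60%
```

Sorting each respondent's set before pairing keeps ("Makeup", "Skincare") and ("Skincare", "Makeup") from being counted as different pairs.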

3) Open-Ended 💬

Open-ended questions are perfect for capturing the “why” behind consumer choices, letting respondents write freely without constraints. If Panther Foods wanted to explore consumer taste preferences for a new plant-based protein bar, they might ask:

Q: What flavors would you like to see in our next product, and why?

Potential responses (example free-text answers):

- “A spicy sriracha-lime blend for a bold kick”

- “Classic chocolate-peanut butter with low sugar”

- “Tropical mango-coconut for a refreshing twist”

Future respondents could provide elaborate feedback—maybe someone wants a sriracha-lime bar to match their adventurous palate—and you’d gather qualitative gems that no multiple-choice format could unearth. The downside? Coding and analyzing these open text answers can be as enjoyable as assembling a 2,000-piece jigsaw puzzle in the dark, so plan your analysis time wisely.

4) Numeric Open-Ended 🔢

Numeric open-ended questions capture straightforward number responses without the rigidity of preset ranges. Suppose Saiyuki Tech, a SaaS provider, wants to gauge how many hours per week enterprise software users spend in their project management tool. They could pose a numeric open-ended question like:

Q: How many hours do you estimate you’ll spend using our platform each week?

(Answer is a free numeric entry, e.g., “2,” “15,” “40,” etc.)

If they ran this question, they might discover usage estimates anywhere from a modest 2 hours to a jaw-dropping 40, arming them with the data needed to plan feature development and user support. It’s a great way to capture real-world usage figures without forcing respondents to squeeze their answer into a predefined bracket.

5) Description 📜

A description question type (sometimes called a “text display”) doesn’t require a response; it’s primarily for presenting information or context right inside the survey. Picture Nintendo again, giving detailed info about a new gaming subscription plan before asking the next question, such as:

(Displayed Text Only):

- “Our new subscription plan includes exclusive skins, advanced multiplayer features, and monthly digital currency for in-game purchases.”

They might follow up with a question afterward to gauge interest or likelihood to subscribe. This ensures respondents have the background necessary to answer upcoming questions accurately, but it also tests your skill at writing concise explanations that don’t cause eyes to glaze over.

6) Slider 📏

Slider questions are a sleek way to capture the intensity or degree of an opinion on a continuum. If 3D Printing Solutions in the Manufacturing sector wants to measure how confident potential B2B customers are in 3D-printed prototypes versus traditionally tooled prototypes, they might ask:

Q: How confident are you in the durability of 3D-printed components?

- Slider scale from 0 (Not at all confident) to 100 (Extremely confident)

Future results might cluster around the 70–80 range if the market is fairly confident but not 100% convinced yet. It’s an engaging format for participants, though analyzing 101 possible values (0 to 100) can be more complex than working with a simple 5-point scale.

7) Rating Stars ⭐

Rating star questions replicate that familiar online review feel, making them visually appealing and intuitive for respondents. If Rockstar Energy wants a quick measure of satisfaction for a new flavor, they could ask:

Q: How would you rate our new Mango-Lime energy drink?

- 1 Star (Very Dissatisfied)

- 2 Stars

- 3 Stars

- 4 Stars

- 5 Stars (Very Satisfied)

If they ran this question among Red Bull fans, they might see an average rating of 4.2 out of 5 stars, suggesting that the new flavor is a hit. The star format adds a friendly, consumer-focused vibe to the survey, though it does reduce nuance to a single aggregated rating.

8) Ranking 🔝

Ranking questions let respondents order multiple items based on preference or importance. If Nintendo wants to see which game features matter most to eSports fans—like gameplay difficulty, character customization, online community, or visual quality—they could ask:

Q: Please rank the following features in order of importance (1 = Most Important, 4 = Least Important):

- Gameplay difficulty

- Character customization

- Online community

- Visual quality

The future results might reveal that 60% rank “online community” as number one, overshadowing “visual quality,” which might come as a surprise. While the ranking format is super-helpful for prioritizing product features, remember that analyzing ties or near-ties can feel like solving a Rubik’s Cube blindfolded.
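Two simple summaries for ranking data are the average rank (lower means more important) and the share of respondents who put each item first. A minimal sketch with five hypothetical eSports fans:

```python
from statistics import mean

FEATURES = ["Gameplay difficulty", "Character customization", "Online community", "Visual quality"]

# Hypothetical rankings: each list orders FEATURES from most (index 0) to least important.
rankings = [
    ["Online community", "Visual quality", "Character customization", "Gameplay difficulty"],
    ["Online community", "Character customization", "Visual quality", "Gameplay difficulty"],
    ["Visual quality", "Online community", "Gameplay difficulty", "Character customization"],
    ["Online community", "Gameplay difficulty", "Visual quality", "Character customization"],
    ["Character customization", "Online community", "Visual quality", "Gameplay difficulty"],
]

def average_rank(feature: str) -> float:
    """Mean position (1 = most important) across respondents; lower is better."""
    return mean(r.index(feature) + 1 for r in rankings)

def first_place_share(feature: str) -> float:
    """Share of respondents who ranked this feature number one."""
    return sum(r[0] == feature for r in rankings) / len(rankings)

for f in sorted(FEATURES, key=average_rank):
    print(f"{f}: avg rank {average_rank(f):.1f}, ranked #1 by {first_place_share(f):.0%}")
```

Looking at both numbers helps with near-ties: two features with similar average ranks can differ a lot in how often they're anyone's top priority.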

9) Matrix Table 🗂️

Matrix table questions let you bundle multiple items and rating scales into a single, compact grid. Imagine Glorvon in the Fintech space evaluating multiple service attributes by asking:

Q: Please rate each of the following on a scale from “Very Poor” to “Very Good.”

- User interface

- Transaction fees

- Speed of transfers

- Customer support

If they ran this question, they could see how each element stacks up across the same scale, painting a holistic picture of service satisfaction. The matrix format is super-efficient, though it can sometimes overwhelm respondents if it looks like a giant, data-hungry bingo card.

10) Constant Sum ➕

Constant sum questions ask respondents to allocate a fixed number of points among different categories, revealing relative priorities. If Nintendo wants to see how PlayStation owners might split their gaming budget, they could pose a constant sum question like:

Q: You have 100 tokens to allocate across the following categories. How would you distribute them?

- Subscription services

- New game titles

- In-game items

- Gaming accessories

Future responses might show that 40 tokens go to new game titles, 30 to subscription services, 20 to accessories, and 10 to in-game items, revealing exactly where people’s money is likely to flow. While the data can be incredibly telling, be prepared for a slight learning curve from respondents who might wonder why they can’t just choose everything.
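Most survey platforms enforce the fixed total on screen, but if you process raw exports, a sketch like this one checks that each answer uses exactly 100 tokens before averaging the allocations. The three respondents are hypothetical, and one is deliberately invalid.

```python
CATEGORIES = ["Subscription services", "New game titles", "In-game items", "Gaming accessories"]
TOTAL_TOKENS = 100

# Hypothetical allocations, one dict per respondent.
allocations = [
    {"Subscription services": 30, "New game titles": 40, "In-game items": 10, "Gaming accessories": 20},
    {"Subscription services": 25, "New game titles": 50, "In-game items": 5, "Gaming accessories": 20},
    {"Subscription services": 40, "New game titles": 40, "In-game items": 10, "Gaming accessories": 15},  # sums to 105
]

def is_valid(alloc: dict[str, int]) -> bool:
    """Valid only if the answer uses exactly the fixed budget and has no negative values."""
    return sum(alloc.values()) == TOTAL_TOKENS and all(v >= 0 for v in alloc.values())

valid = [a for a in allocations if is_valid(a)]
averages = {c: sum(a[c] for a in valid) / len(valid) for c in CATEGORIES}
print(f"{len(valid)} of {len(allocations)} answers valid")
print(averages)
```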

11) Drill Down 🕵️

Drill-down questions guide respondents through nested options, ensuring a more personalized response path. For instance, if Panther Foods wants to narrow down snack preferences, they might use the following structure:

Q1: Do you prefer savory or sweet snacks?

- Savory

- Sweet

Q2 (if Savory): Which do you prefer?

- Chips

- Crackers

Q2 (if Sweet): Which do you prefer?

- Cookies

- Candy

If they ran this question, they’d be able to see exactly how many participants prefer savory over sweet, and then which sub-category each group leans toward. This approach is extremely helpful for exploring hierarchical or layered product lineups, though coding skip logic can sometimes feel like you’re herding cats.
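The skip logic behind this structure is essentially a lookup from each Q1 answer to its Q2 options. A minimal sketch, using a hypothetical branching map and hypothetical completed responses, that rejects impossible answer paths and then tallies both levels of the hierarchy:

```python
from collections import Counter

# Hypothetical branching map: the Q1 answer decides which Q2 options are shown.
FOLLOW_UPS = {
    "Savory": ["Chips", "Crackers"],
    "Sweet": ["Cookies", "Candy"],
}

def validate_path(q1: str, q2: str) -> None:
    """Reject answer pairs that the skip logic could never have produced."""
    if q2 not in FOLLOW_UPS.get(q1, []):
        raise ValueError(f"{q2!r} is not a valid follow-up to {q1!r}")

# Hypothetical completed paths: (Q1 answer, Q2 answer).
paths = [("Savory", "Chips"), ("Sweet", "Cookies"), ("Savory", "Crackers"), ("Savory", "Chips")]
for q1, q2 in paths:
    validate_path(q1, q2)

top_level = Counter(q1 for q1, _ in paths)  # savory vs. sweet
sub_level = Counter(paths)                  # sub-category within each branch
print(top_level)                            # Counter({'Savory': 3, 'Sweet': 1})
print(sub_level[("Savory", "Chips")])       # 2
```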

12) Net Promoter Score® (NPS) 🚀

The NPS question focuses on one thing: how likely your respondents are to recommend your brand, product, or service. If Nintendo wants to gauge brand loyalty among Nintendo Switch owners, they could ask:

Q: On a scale from 0 to 10, how likely are you to recommend Nintendo to a friend or colleague?

- 0 = Not at all likely

- 10 = Extremely likely

If they ran this question, they’d subtract the percentage of Detractors (0–6) from the percentage of Promoters (9–10) to get the famous NPS; Passives (7–8) count toward the total number of respondents but are otherwise left out of the calculation. While NPS won’t tell you every detail about why your brand is beloved or hated, it’s a great snapshot of brand advocacy. Just don’t forget to pair it with open-ended follow-ups for context.
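The NPS arithmetic fits in a few lines. Note that Passives still sit in the denominator, so a large passive group pulls the score toward zero. The ten responses below are hypothetical.

```python
def net_promoter_score(scores: list[int]) -> float:
    """NPS = % promoters (9-10) minus % detractors (0-6); passives (7-8) count only in the total."""
    if not scores:
        raise ValueError("need at least one response")
    if any(not 0 <= s <= 10 for s in scores):
        raise ValueError("scores must be between 0 and 10")
    promoters = sum(s >= 9 for s in scores)
    detractors = sum(s <= 6 for s in scores)
    return 100 * (promoters - detractors) / len(scores)

# Hypothetical responses from 10 Switch owners: 5 promoters, 3 passives, 2 detractors.
responses = [10, 9, 9, 10, 9, 8, 7, 7, 6, 3]
print(net_promoter_score(responses))  # 30.0
```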

13) A/B Test ⚖️

A/B tests compare two options—like two ad creatives or two product labels—to see which resonates more. For example, Saiyuki Tech could show a group of enterprise software users two different web app dashboards, each with unique designs or feature sets:

Q: Which web app dashboard do you prefer?

- Dashboard A (Minimalist layout)

- Dashboard B (Robust sidebar)

If they ran this test, they might find that 65% prefer Dashboard A for its minimalist layout, while 35% stick with Dashboard B. This method is data-driven, straightforward, and perfect for incremental improvements—though sometimes you’ll learn that both versions are equally disliked (a humbling experience indeed).
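Whether a 65/35 split is meaningful depends on sample size. Assuming each respondent saw both dashboards and picked one (so one share is tested against 50%, rather than comparing two separate groups), a simple check is a one-sample z-test for a proportion. The sample of 100 is hypothetical, and the normal approximation used here is only reasonable for samples that aren't small.

```python
import math

def preference_z_test(n_prefer_a: int, n_total: int) -> tuple[float, float]:
    """One-sample z-test of whether the share preferring A differs from 50%.

    Returns (z statistic, two-sided p-value) using the normal approximation.
    """
    p_hat = n_prefer_a / n_total
    se = math.sqrt(0.5 * 0.5 / n_total)  # standard error under the null of no preference
    z = (p_hat - 0.5) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value

# Hypothetical sample: 65 of 100 respondents preferred Dashboard A.
z, p = preference_z_test(65, 100)
print(f"z = {z:.2f}, p = {p:.4f}")  # z = 3.00, p = 0.0027
```

If the two dashboards were instead shown to separate groups, a two-proportion test would be the appropriate comparison.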

14) Conjoint 🔀

Conjoint analysis helps you figure out which combination of features or attributes your market values most. If Nintendo wants to figure out the perfect bundle for a new “eSports subscription,” it could vary price, exclusive skins, early access to games, and monthly in-game currency in different hypothetical packages, for example:

Q: Which subscription package would you be most likely to purchase?

- Package A: Mid-range price, Exclusive skins, No in-game currency

- Package B: High price, Early access to games, Monthly in-game currency

- Package C: Low price, Fewer exclusive skins, Occasional bonus items

By analyzing which package is chosen most often, Nintendo could learn that 70% of Fortnite fans prefer a mid-tier price with exclusive skins and minimal in-game currency. Conjoint analysis is incredibly revealing but can get complicated fast—like trying to juggle flaming torches while riding a unicycle—so it’s best to be methodical in your design.

15) MaxDiff 🆚

MaxDiff stands for Maximum Difference Scaling, and it’s used to identify the most and least important attributes from a set. Panther Foods might use MaxDiff to test packaging elements by asking:

Q: For the attributes listed below, choose which one is MOST important to you and which one is LEAST important to you.

- Color scheme

- Product claims

- Brand logo size

- Tagline

If they ran this question, respondents might consistently pick “brand logo size” as least important, while “product claims” stands out as the top priority. This method is excellent for pinpointing relative preferences, though interpreting the results might take a bit more brainwork than a simple rating scale.
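A quick first pass at MaxDiff results is a best-minus-worst count score for each item; full studies usually estimate scores with logit or hierarchical Bayes models instead. A minimal sketch with four hypothetical respondents who all saw the same set of four packaging elements:

```python
from collections import Counter

ITEMS = ["Color scheme", "Product claims", "Brand logo size", "Tagline"]

# Hypothetical answers: (most important, least important) per respondent.
answers = [
    ("Product claims", "Brand logo size"),
    ("Product claims", "Tagline"),
    ("Color scheme", "Brand logo size"),
    ("Product claims", "Brand logo size"),
]

best = Counter(b for b, _ in answers)
worst = Counter(w for _, w in answers)
times_shown = len(answers)  # every item appeared in every task in this simple example

# Best-worst count score: (times chosen best - times chosen worst) / times shown.
scores = {item: (best[item] - worst[item]) / times_shown for item in ITEMS}
for item, s in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{item}: {s:+.2f}")
```

In real designs each respondent sees rotating subsets of a longer list, so `times_shown` has to be counted per item rather than assumed.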

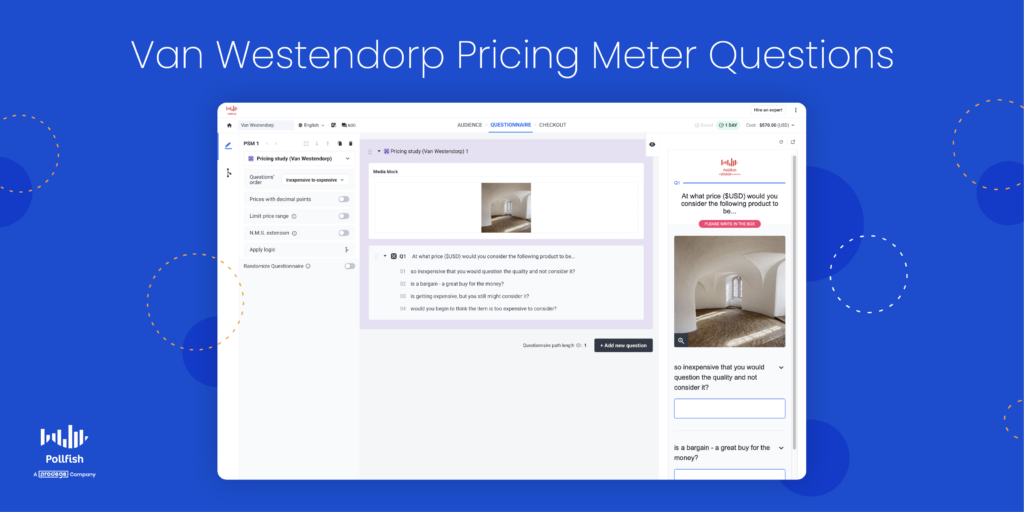

16) Pricing Study (Van Westendorp) 💲

The Van Westendorp Price Sensitivity Meter is a classic approach to determine consumers’ price thresholds: too cheap, cheap, expensive, and too expensive. If Nintendo were launching a new premium controller, they could ask eSports fans something like:

Q: At what price point would you consider this controller to be:

- Too cheap?

- Cheap?

- Expensive?

- Too expensive?

If they ran this question, they’d find a range—perhaps $40 to $60—where most potential buyers consider the controller acceptable, helping them pinpoint an optimal price. Though it won’t address competitor pricing or brand perception directly, it gives a straightforward sense of where your price might be too low or too high.
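The full Van Westendorp method plots four cumulative curves and reads price points off their intersections. The simplified sketch below captures only the "acceptable range" idea: for each candidate price, it computes the share of respondents who consider that price neither too cheap nor too expensive, then reports where that share is a majority. The five respondents' thresholds are hypothetical, and the "cheap" and "expensive" answers are carried along but only used by the full method.

```python
# Hypothetical answers per respondent: (too cheap, cheap, expensive, too expensive), in dollars.
answers = [
    (20, 35, 55, 70),
    (25, 40, 60, 80),
    (30, 40, 50, 65),
    (15, 30, 45, 60),
    (35, 45, 65, 90),
]

def acceptance(price: int) -> float:
    """Share of respondents for whom `price` is neither too cheap nor too expensive."""
    ok = sum(1 for too_cheap, _, _, too_exp in answers if too_cheap < price < too_exp)
    return ok / len(answers)

grid = range(0, 121)  # whole-dollar candidate prices
majority = [p for p in grid if acceptance(p) > 0.5]
print(f"Acceptable to a majority: ${min(majority)} to ${max(majority)}")

peak = max(acceptance(p) for p in grid)
peak_prices = [p for p in grid if acceptance(p) == peak]
print(f"Peak acceptance {peak:.0%} from ${min(peak_prices)} to ${max(peak_prices)}")
```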

With these question types in your research arsenal, you’re equipped to capture feedback in ways that are both diverse and data-rich. Whether you’re working with a major player like Nintendo or a niche brand exploring emerging markets, customizing your question format is the key to deeper insights.

When you’re ready to apply these methods for real, remember that the right survey platform or research partner can elevate your outcomes significantly.

15 Steps to Pick The Best Marketing Agency

The best marketing agency will accelerate your brand’s momentum; the wrong one will just drain your resources with little to show in return.

I've got a unique perspective on marketing agencies: over the last 13 years I've hired many agencies for various organizations, and I've even worked at a few myself.

Below is your 15-step roadmap to identify and partner with an agency that will elevate your brand and deliver quantifiable ROI.

1. Prioritize KPIs Over Vanity Metrics 🔠

A results-focused agency zeroes in on the metrics that genuinely drive profit, not just surface-level engagement.

In direct response terms, “likes” and “shares” don’t pay the bills - conversion rates, CAC (Customer Acquisition Cost), and CLTV (Customer Lifetime Value) do. Of course, if your goal is brand awareness, and your product is not digital, this picture looks quite different.

A good branding agency will still have tangible KPIs; they're just different ones, such as awareness and purchase intent. These are often measured with brand trackers that compare exposed and unexposed (control) groups to quantify the lift in sentiment a campaign produces over time.
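The direct-response metrics and brand lift mentioned above come down to simple arithmetic. A minimal sketch with hypothetical campaign numbers; the CLTV formula here is one common simplification (margin-adjusted revenue over an expected customer lifetime), and brand lift is the plain difference between an exposed and a control group.

```python
# Hypothetical campaign figures; swap in your own.
ad_spend = 50_000.0        # total spend for the period
new_customers = 400
avg_order_value = 80.0
orders_per_year = 3
gross_margin = 0.40
expected_years = 2

cac = ad_spend / new_customers
# One common simplified CLTV: margin-adjusted revenue over the expected customer lifetime.
cltv = avg_order_value * orders_per_year * expected_years * gross_margin
print(f"CAC ${cac:.2f}, CLTV ${cltv:.2f}, CLTV:CAC {cltv / cac:.2f}")

# Brand lift: difference in a tracked metric between exposed and control groups.
exposed_awareness = 0.42
control_awareness = 0.35
lift_points = (exposed_awareness - control_awareness) * 100
print(f"Awareness lift: {lift_points:+.1f} percentage points")
```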

2. Get Evidence of Similar Success 📈

Proven experience with your target audience is non-negotiable.

If you’re in the fitness industry and launching a premium gym like "Pulse Fitness," the agency should have robust case studies of similar campaigns - complete with the strategies used, channels leveraged, and the KPIs they improved.

That's not to say that a quality agency can't learn your industry, but they might be cutting their teeth on you as a client.

Sometimes this is okay. For instance, if you're hiring them for a more specialized service, it may be rare to find an agency that combines that very specific specialty AND experience with your target audience. Just be aware of it.

Past performance in analogous scenarios is the best gauge for their potential success with your brand.

3. Assess Partnership Mentality 👭

You need a strategic ally, not just a vendor.

That partnership is built at both the contract level and the human level.

For instance, an agency paid a flat 20k per month to run your ads, regardless of ad spend or performance, has little reason to push harder and will tend to lose interest unless they sense you're about to quit on them. That means stagnant results for you. You want an agency that co-creates ideas and pivots strategies for mutual growth.

So, it's best to tie their compensation to performance, so you win together and lose together. Ideally, you want pricing and incentives aligned with your growth goals. That way, if you're holding up your end, they'll see the value in continuing to invest effort into you as a client, and you won't become just another drop in the bucket.

The only risk here is if your product is no good, in which case, you've got bigger problems anyway!

4. Validate Their Depth of Market Research Expertise 🔬

Well BEFORE any campaign is thought up, or any pen is put to paper (or finger is put to keyword, I suppose), your agency should be doing its research. And that's not just about gathering data willy-nilly.

It means tangible research objectives, credible research sources, quality research design, good research processes, robust data analysis and so on.

Imagine a company, "TrendSpot Solutions," attempting to gather consumer insights for a new product launch. For research, they skim a few relevant competitor websites, then deploy a 5-question survey with no clear objective beyond "understanding consumer preferences." If you asked them about any of the market research principles covered in this university, they'd have no answer.

They use a convenience sample of just 100 respondents sourced from social media ads with no screening criteria, resulting in a mix of irrelevant participants (e.g., teenagers answering a survey about retirement planning).

They fail to implement quotas, leading to overrepresentation of certain demographics (e.g., 75% female respondents when their target audience is evenly split by gender).

The survey questions are poorly worded, with leading questions like, “Don’t you love eco-friendly packaging?” and double-barreled questions such as, “How satisfied are you with the price and durability?”

Worse yet, they don’t validate any participant behaviors—respondents claim to buy organic food weekly, but there’s no effort to verify this behavior through screening or follow-up questions.

That is literally 7 wrong things stacked on top of each other. This is like trying to extract quality data from a waffle. It doesn't work. Yet some of the "best marketing agencies" do this!

Contrast this with high-quality survey research conducted by a more sophisticated marketing agency on behalf of another company, let's call them "InsightEdge." They begin with a clear research objective: to determine key purchase drivers for their eco-friendly product among sustainability-focused millennials.

They target a robust sample size of 1,500 respondents, large enough for precise estimates and for segmenting the results. They implement nested quotas in sub-buckets, ensuring proportional representation across gender, age groups, income levels, and geographic regions.
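Nested quotas are easier to reason about with numbers attached. Below is a minimal Python sketch that splits a 1,500-complete target across gender × age cells. It assumes the dimensions are independent (each cell's share is the product of its marginal shares, a simplification) and uses largest-remainder rounding so the cells still add up. `nested_quotas` and `margin_of_error` are illustrative names, not Pollfish features, and the shares are made up.

```python
from itertools import product
from math import floor, sqrt


def nested_quotas(total: int, dimensions: dict[str, dict[str, float]]) -> dict[tuple, int]:
    """Split `total` completes across every combination of categories.

    Assumes the dimensions are independent, so each cell's share is the product
    of its marginal shares. Real quota plans often use joint census data instead.
    """
    for name, shares in dimensions.items():
        if abs(sum(shares.values()) - 1.0) > 1e-9:
            raise ValueError(f"shares for {name!r} must sum to 1")
    exact = {}
    for combo in product(*(shares.items() for shares in dimensions.values())):
        cell_share = 1.0
        for _, share in combo:
            cell_share *= share
        exact[tuple(label for label, _ in combo)] = cell_share * total
    # Largest-remainder rounding keeps the integer quotas summing to `total`
    quotas = {cell: floor(value) for cell, value in exact.items()}
    shortfall = total - sum(quotas.values())
    by_remainder = sorted(exact, key=lambda c: exact[c] - quotas[c], reverse=True)
    for cell in by_remainder[:shortfall]:
        quotas[cell] += 1
    return quotas


def margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Approximate 95% margin of error for a proportion under simple random sampling."""
    return z * sqrt(p * (1 - p) / n)


plan = nested_quotas(1500, {
    "gender": {"female": 0.5, "male": 0.5},
    "age": {"18-34": 0.3, "35-54": 0.4, "55+": 0.3},
})
for cell, quota in plan.items():
    print(cell, quota)
print(f"Margin of error at n=1,500: ±{margin_of_error(1500):.1%}")
```

The margin-of-error formula assumes simple random sampling, so for an online panel treat it as a rough guide (about ±2.5 points at n=1,500), and remember that each segment you cut out has a wider margin than the total.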

Respondents are carefully screened using validated behaviors (as shown below in the Pollfish platform), such as having purchased an eco-friendly product in the past three months.

The survey is also meticulously designed, avoiding leading or ambiguous questions, with a mix of closed-ended questions (e.g., “On a scale of 1 to 5, how important is biodegradable packaging to you?”) and open-ended ones that allow nuanced responses.

Skip logic ensures that irrelevant questions are avoided based on earlier responses, improving the user experience. The result is a dataset rich in actionable insights, enabling InsightEdge to tailor marketing strategies, pricing, and product features to real consumer behaviors and preferences, giving them a competitive advantage in the market.
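Under the hood, skip logic is essentially a routing table. Here is a minimal Python sketch of the idea with hypothetical question IDs: respondents who haven't bought an eco-friendly product recently skip the purchase-detail question. Survey platforms configure this in their builders, so this only illustrates the logic, not how any platform implements it.

```python
from typing import Dict, List, Optional

# Hypothetical routing table: question ID -> {answer: next question ID}.
# "default" applies to any other answer; None ends the survey.
ROUTING: Dict[str, Dict[str, Optional[str]]] = {
    "q1_bought_eco_recently": {"Yes": "q2_which_product", "No": "q4_barriers"},
    "q2_which_product": {"default": "q3_packaging_importance"},
    "q4_barriers": {"default": "q3_packaging_importance"},
    "q3_packaging_importance": {"default": None},
}


def next_question(current: str, answer: str) -> Optional[str]:
    rules = ROUTING[current]
    return rules.get(answer, rules.get("default"))


def questions_seen(answers: Dict[str, str], start: str = "q1_bought_eco_recently") -> List[str]:
    """Walk the routing table to list the questions one respondent would see."""
    path: List[str] = []
    question: Optional[str] = start
    while question is not None:
        path.append(question)
        question = next_question(question, answers.get(question, ""))
    return path


print(questions_seen({"q1_bought_eco_recently": "No"}))
```

A respondent who answers "No" sees only q1, q4, and q3, so nobody is asked which eco-friendly product they bought when they just said they haven't bought one.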

Now your marketing agency has a legitimate foundation for your campaigns.

5. Look for Strong Data-Driven Campaigns 🔟

Take an imaginary luxury watch brand, “TimeLux.” A good agency won't spam Facebook with ads. Instead, it might use advanced retargeting to reach high-income professionals who browsed competitor websites but did not convert.

The creative can highlight superior craftsmanship or an exclusive brand heritage that resonates with their aspirations. That’s how you turn clicks into conversions.

Just look at Nike’s approach to personalized ads using data from its NikePlus membership platform. They retarget users with relevant offers (like exclusive releases or early access to limited-edition merchandise), using analytics to drive urgency and emotional resonance.

Top agencies create campaigns that seamlessly blend compelling creative hooks with rigorous data analytics. In practical terms, this means your agency should be fluent in A/B testing, audience segmentation, and dynamic creative optimization (DCO).
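Fluency in A/B testing largely comes down to knowing when a difference between variants is real rather than noise. As a minimal sketch, here is the standard pooled two-proportion z-test in Python; the conversion counts are made up, and the function name is ours.

```python
from math import erfc, sqrt
from typing import Tuple


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> Tuple[float, float]:
    """Pooled two-proportion z-test; returns (z, two-sided p-value)."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("no variation in conversions; the test is undefined")
    z = (rate_b - rate_a) / std_err
    # For a standard normal Z, P(|Z| >= |z|) = erfc(|z| / sqrt(2))
    p_value = erfc(abs(z) / sqrt(2))
    return z, p_value


# Hypothetical test: variant A converts 200 of 5,000 visitors, variant B 260 of 5,000
z, p = two_proportion_z_test(200, 5_000, 260, 5_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

With these numbers (4.0% vs. 5.2%), z comes out around 2.86 and the two-sided p-value around 0.004, so the difference is unlikely to be chance. Deciding the sample size before the test starts, rather than stopping as soon as p dips below 0.05, keeps this honest.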

They will use platforms like Google Analytics 4, Mixpanel, or Power BI to not only track clicks but also measure funnel drop-off rates, lifetime value (LTV), and customer acquisition costs (CAC). The ability to pivot swiftly based on these insights is critical for high-ROI campaigns.
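Those three metrics are simple arithmetic once the data is clean. The Python sketch below uses hypothetical numbers and a deliberately simple, undiscounted LTV definition; LTV formulas vary a lot between businesses, so treat this one as an assumption rather than the standard.

```python
from typing import List, Tuple


def funnel_drop_off(stages: List[Tuple[str, int]]) -> List[Tuple[str, float]]:
    """Share of users lost between each pair of consecutive funnel stages."""
    return [
        (f"{prev_name} -> {name}", 1 - count / prev_count)
        for (prev_name, prev_count), (name, count) in zip(stages, stages[1:])
    ]


def customer_acquisition_cost(marketing_spend: float, new_customers: int) -> float:
    """CAC: spend in a period divided by customers acquired in that period."""
    return marketing_spend / new_customers


def simple_ltv(avg_order_value: float, orders_per_year: float,
               gross_margin: float, expected_years: float) -> float:
    """A deliberately simple, undiscounted, margin-based lifetime value."""
    return avg_order_value * orders_per_year * gross_margin * expected_years


funnel = [("visit", 10_000), ("product view", 4_000), ("add to cart", 800), ("purchase", 200)]
for step, rate in funnel_drop_off(funnel):
    print(f"{step}: {rate:.0%} drop-off")

cac = customer_acquisition_cost(50_000, 200)
ltv = simple_ltv(avg_order_value=120, orders_per_year=3, gross_margin=0.6, expected_years=2)
print(f"CAC ${cac:,.0f}, LTV ${ltv:,.0f}, LTV:CAC {ltv / cac:.1f}")
```

Here the biggest leak is between product view and add to cart (80% drop-off), and an LTV:CAC ratio under 2 would be a signal to look at acquisition costs or retention before scaling spend.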

6. Gauge Their Channel Expertise—Including Niche Platforms 🌐

A true marketing partner will master both mainstream platforms (Facebook, Google, Instagram) and specialized, sometimes smaller, but highly potent channels. This can involve industry-specific forums, niche social networks, or platforms where your target audience heavily congregates.

Here's another hypothetical example: “PageTurner Press,” a publishing company.

The agency might propose:

- Amazon Author Central optimization

- Targeted campaigns on Goodreads

- Influencer promotions on TikTok (especially BookTok communities)

- Local book tour in the author’s hometown.

Each channel addresses a different facet of the readership journey.

Sephora’s success with beauty micro-influencers on YouTube and Instagram has been well-documented. They also run specialized campaigns on platforms like Pinterest, focusing on shoppable pins and user-generated content.

Evaluate whether the agency leverages advanced targeting or brand partnership tools. For instance, using TikTok’s “Creator Marketplace” can help you quickly spot relevant influencers for a niche. Agencies that excel here can dramatically set you apart from competitors who stick solely to mass-market channels.

7. Evaluate Their Copywriting Prowess 🖊

I’ve literally read 25+ books on copywriting and 3,967 tips online, so this is a fun one for me. Powerful copy lights a fire in your audience’s mind. It can transform lukewarm curiosity into genuine belief, and eventually, a sale.

Here are my original copywriting tips:

To put it more simply, assess their ability to grab attention, leave a mark, and spark immediate action.

8. Assess Their Design Capabilities 🎨

Stunning visuals can be the difference between a fleeting glance and a lasting impression.

A capable agency weaves design seamlessly into your entire marketing funnel—from ad creatives that halt the scroll to landing pages that guide the user’s eye.

They should be crafting amazing creatives, ones that show the pain points and value in a compelling light and look nothing like the generic image in the header of this post (which I used because our designer Andrea is working on more important product-related items right now).

Look at their work - maybe they helped refresh an old, janky user interface or created consistent brand visuals for a top-tier apparel line, or designed something that is way cooler than anything they had before.

Cohesive design not only elevates brand perception but can also boost click-through and conversion rates. A design-savvy partner is worth its weight in gold.

9. Check Their Technical Integration Expertise 🤖

A top agency needs more than just creative flair; they should also excel at implementing and integrating the various platforms that power modern marketing.

This includes syncing data from CRMs like Salesforce, marketing automation systems like Marketo, or e-commerce platforms like Shopify. Let’s say you’re “UrbanLeather Co.” selling custom wallets and bags: your agency should seamlessly connect your email automation, retargeting pixels, and inventory management so your marketing runs like a well-oiled machine, as in this example:

10. Investigate Their Quality Control Processes 🎪

Typos in an ad or broken links in a sales funnel can devastate your credibility. Before signing on, poke around their QA systems. Do they have a multi-step review process? How often do they audit ads, landing pages, and email flows? For example, if you’re rolling out a new “buy one, get one” offer for “FreshThreads Apparel,” they should verify promotional codes, test checkout flows, and ensure that every email or ad references the correct details. Quality assurance is about protecting both your brand and your bottom line.
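Part of that QA can be automated. As a hypothetical sketch, a script could cross-check every promo code mentioned in ad and email copy against the codes checkout actually accepts. The creative names, the "code XXXX" phrasing convention, and the regex are all assumptions; this complements, and does not replace, test purchases through the live checkout flow.

```python
import re
from typing import Dict, List, Set

# Assumed copy convention: offers say "code XXXX" with the code in capitals/digits.
PROMO_PATTERN = re.compile(r"\b(?i:code)\s+([A-Z0-9]{4,})\b")


def audit_promo_codes(creatives: Dict[str, str], active_codes: Set[str]) -> Dict[str, List[str]]:
    """Flag creatives that cite no promo code or a code checkout will not accept."""
    issues: Dict[str, List[str]] = {}
    for name, text in creatives.items():
        found = set(PROMO_PATTERN.findall(text))
        if not found:
            issues[name] = ["no promo code found"]
            continue
        unknown = sorted(found - active_codes)
        if unknown:
            issues[name] = [f"unknown code {code}" for code in unknown]
    return issues


creatives = {
    "email_launch": "Buy one, get one free! Use code FRESHBOGO at checkout.",
    "ad_instagram": "BOGO weekend: enter code FRESHBOG0 to save.",  # zero instead of the letter O
    "ad_search": "Buy one, get one free this weekend only.",
}
print(audit_promo_codes(creatives, active_codes={"FRESHBOGO"}))
```

The zero-for-O typo in the Instagram ad is exactly the kind of error a tired human reviewer skims past and a simple automated check catches.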

11. Demand Robust Attribution Models 🔄

Attribution is more than just a buzzword—it’s your key to understanding exactly which channels or touchpoints drive revenue. Comprehensive models (e.g., multi-touch or data-driven) help you decide whether your influencer campaign on Instagram or your remarketing ads on LinkedIn are delivering the best bang for your buck. If you’re launching a premium tech product like “QuantumWork Laptops,” you need to track each buyer’s journey—often involving multiple site visits, video demos, email nurture sequences, and possibly even offline store visits. The right attribution model shows you precisely where to double down.
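The rule-based models are simple enough to sketch. Below is a minimal Python illustration with hypothetical journeys and channel names, comparing first-touch, last-touch, linear, and position-based (U-shaped) credit. The 40/20/40 split is a common convention rather than a standard, and "data-driven" attribution (such as GA4's) estimates weights statistically from your data, which this sketch does not attempt.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def touch_weights(n: int, model: str) -> List[float]:
    """Credit share for each of n ordered touchpoints under a rule-based model."""
    if model == "first":
        return [1.0] + [0.0] * (n - 1)
    if model == "last":
        return [0.0] * (n - 1) + [1.0]
    if model == "linear":
        return [1.0 / n] * n
    if model == "position":  # U-shaped: 40% first, 40% last, 20% split across the middle
        if n <= 2:
            return [1.0 / n] * n
        return [0.4] + [0.2 / (n - 2)] * (n - 2) + [0.4]
    raise ValueError(f"unknown model: {model}")


def attribute(journeys: List[Tuple[List[str], float]], model: str) -> Dict[str, float]:
    """Distribute each converting journey's revenue across the channels it touched."""
    credit: Dict[str, float] = defaultdict(float)
    for touches, revenue in journeys:
        if not touches:
            continue
        for channel, weight in zip(touches, touch_weights(len(touches), model)):
            credit[channel] += weight * revenue
    return dict(credit)


journeys = [
    (["instagram_influencer", "video_demo", "email_nurture", "store_visit"], 2_000.0),
    (["linkedin_remarketing", "email_nurture"], 1_000.0),
]
for model in ("first", "last", "linear", "position"):
    print(model, attribute(journeys, model))
```

Running all four side by side is the useful part: email nurture gets nothing under first-touch but the most credit under linear, which is why the choice of model can change where you "double down."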

12. Ensure Their Reporting is Transparent and Actionable 🗞

Dashboards and reports should empower you to make data-backed decisions, not drown you in complexity. Ask how often you’ll receive performance updates and what KPIs they plan to highlight. If you’re “SolarVista Energy,” for instance, do they break down cost per acquisition by zip code? Can they show you lead quality over time? Transparency is non-negotiable. The best agencies will proactively share insights, recommend tweaks, and offer immediate rationale when performance dips or spikes.
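A zip-code CPA breakdown is a simple aggregation, but it's easy to get subtly wrong. In this minimal Python sketch (the row fields `zip`, `spend`, and `conversions` are hypothetical), spend and conversions are summed per zip first and then divided; averaging per-campaign CPAs instead would overweight small campaigns.

```python
from collections import defaultdict
from typing import Dict, List, Optional


def cpa_by_zip(rows: List[dict]) -> Dict[str, Optional[float]]:
    """Cost per acquisition per zip code: total spend / total conversions."""
    spend: Dict[str, float] = defaultdict(float)
    conversions: Dict[str, int] = defaultdict(int)
    for row in rows:
        spend[row["zip"]] += row["spend"]
        conversions[row["zip"]] += row["conversions"]
    # None marks zips that spent money but never converted (CPA is undefined there)
    return {z: spend[z] / conversions[z] if conversions[z] else None for z in spend}


rows = [
    {"zip": "85004", "spend": 400.0, "conversions": 8},
    {"zip": "85004", "spend": 200.0, "conversions": 4},
    {"zip": "92101", "spend": 300.0, "conversions": 0},
    {"zip": "80202", "spend": 500.0, "conversions": 5},
]
print(cpa_by_zip(rows))
```

Surfacing the zero-conversion zips explicitly, rather than hiding them, is the kind of transparency worth asking for in a report.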

13. Evaluate Their Commitment to Self-Improvement 🔄

The digital landscape evolves at breakneck speed, and complacent agencies get left behind. Gauge whether their team actively pursues certifications (like Google Ads, Meta Blueprint), attends industry conferences (e.g., SXSW, AdWeek), or contributes thought leadership pieces to major publications. If you’re working with a brand that’s pushing the envelope—say, an AI-driven fashion platform called “AutoStyle AI”—you want an agency that’s just as enthusiastic about innovation. Their hunger for knowledge and adaptation is your secret weapon in a crowded market.

14. Confirm 24/7 Support and Problem Management Skills 🛑

In the era of social media, brand crises can erupt at lightning speed—a misinterpreted tweet, a product recall, or an influencer scandal. Ensure your agency has a well-documented crisis plan and a genuine ability to offer round-the-clock support. Picture yourself as “AquaPure Beverages,” discovering a contamination scare late on a Friday night. Your agency should be equipped to jump in with immediate updates across channels, coordinate with PR teams, and keep customers in the loop until the issue is resolved. That’s real crisis management.

15. Align Pricing and Incentives With Growth Goals 💳

The best partnerships thrive when incentives match. It might mean a performance-based structure, a hybrid retainer, or revenue sharing once you hit certain KPIs. For “NovaTrack Running Shoes,” if sales skyrocket after a successful digital push, the agency should share in the windfall—and, conversely, be motivated to troubleshoot quickly when conversions lag. Negotiating a pricing model that reflects genuine collaboration can forge a long-lasting, mutually beneficial relationship.
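To see how a hybrid structure behaves, here is a hypothetical fee function in Python: a fixed retainer plus a revenue share above a KPI threshold, with an optional cap. The terms are made-up illustrations to plug your own numbers into, not recommended rates.

```python
from typing import Optional


def monthly_agency_fee(revenue: float, retainer: float, kpi_threshold: float,
                       share_rate: float, bonus_cap: Optional[float] = None) -> float:
    """Hybrid model: a fixed retainer plus a share of revenue above a KPI threshold."""
    bonus = share_rate * max(0.0, revenue - kpi_threshold)
    if bonus_cap is not None:
        bonus = min(bonus, bonus_cap)
    return retainer + bonus


# Hypothetical terms: $10k retainer, 5% of monthly revenue above $200k, bonus capped at $25k
for revenue in (150_000, 300_000, 1_000_000):
    print(revenue, monthly_agency_fee(revenue, 10_000, 200_000, 0.05, bonus_cap=25_000))
```

Below the threshold the agency earns only the retainer, so it has a reason to fix lagging conversions quickly; above it, both sides share the upside until the cap.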

Bottom Line

A great agency is more than a vendor—it’s a strategic extension of your own team, driven by data, committed to continuous innovation, and poised to deliver results under any circumstance. By vetting every aspect—from technical integration prowess to crisis management protocols—you’ll partner with an agency ready to help your brand flourish in a fast-evolving marketing world.

Platforms like Pollfish can enhance your (or your agency’s) consumer insights, fueling the data needed to craft effective, conversion-oriented campaigns. With the right agency and insights on your side, you’re positioned to outsmart and outmarket the competition.

Now, go confidently and secure that agency partnership designed to catapult your brand to the next level.

17 Steps to Turn Open-Ends into Gold with Qualitative Coding

17 Steps to Turn Open-Ends into Gold with Qualitative Coding 🎨

I LOVE really thorough and nuanced open-ended survey responses. There's nothing like your target market telling you, in their own raw, unfiltered words, exactly what is good, bad, right, or wrong about your product.

But if I have, let's say... 2,000 open-ended responses to read through... well, now I'm in a pickle.

Not only would that take forever to read, but I'd also be subject to various biases as I try to extract insights, such as recency bias, confirmation bias, the availability heuristic, and so on.

That's where qualitative coding comes in! In survey research, coding refers to the process of analyzing qualitative data—such as open-text responses to questions—and categorizing them into structured, quantitative formats. This is done to make the data easier to interpret, analyze, and act on. Here’s how it typically works:

Respondents may provide detailed text answers to questions like "What do you like most about this product?" These text responses are then reviewed and assigned to predefined categories or themes (e.g., "quality," "price," "design"). If themes aren't predefined, they may be created during the coding process based on patterns observed in the responses. Finally, the coded results are quantified (for example, the share of responses mentioning each theme), so you can act on them without manually sifting through large volumes of text.
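Here is a deliberately naive Python sketch of that pipeline: a hypothetical keyword-triggered codebook assigns one or more codes to each response, and the results are rolled up into percentages. Plain substring matching will misfire (e.g., "cost" inside "costume," or "look" with no design meaning), which is exactly why the human review and iterative refinement covered in the tips below still matter.

```python
from collections import Counter
from typing import Dict, List, Set

# Hypothetical codebook: each code is triggered by a few keywords.
CODEBOOK: Dict[str, List[str]] = {
    "quality": ["quality", "durable", "well made", "sturdy"],
    "price": ["price", "cheap", "expensive", "affordable", "cost"],
    "design": ["design", "look", "color", "style"],
}


def code_response(text: str, codebook: Dict[str, List[str]]) -> Set[str]:
    """Assign every code whose keywords appear in the response (multi-label)."""
    lowered = text.lower()
    return {code for code, keywords in codebook.items() if any(k in lowered for k in keywords)}


def quantify(responses: List[str], codebook: Dict[str, List[str]]) -> Dict[str, float]:
    """Percentage of responses that received each code."""
    counts = Counter(code for r in responses for code in code_response(r, codebook))
    return {code: 100 * counts[code] / len(responses) for code in codebook}


answers = ["Great quality for the price", "Love the design and color", "Too expensive", "It broke after a week"]
print(quantify(answers, CODEBOOK))
```

Note that the last answer gets no code at all; in a real project, uncoded responses are a signal that the codebook is missing a theme (here, something like "durability complaints").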

While I'm on the topic, I should mention that Pollfish is building out AI capabilities that help improve this process. Nevertheless, best practices for qualitative coding will remain critical. So, let's jump into our 17 expert-level tips.

1. Define Your Codebook with Precision ✍

Your codebook is the Rosetta Stone of your analysis. It needs clarity, consistency, and relevance. Suppose you're working for Celsius Energy Drinks. You've conducted interviews with their audiences: Red Bull drinkers and fitness enthusiasts. Create a code for each recurring theme, like "Energy Boost" or "Taste Preference." If 73% of respondents fall into a bucket called "too sweet," it’s time to refine those flavor profiles.

Here's an example of codes for a coffee startup trying to distinguish itself from packaged bean roasts like Peet's and Starbucks.

2. Distinguish Literal from Interpretive Answers ⚖️

At the outset of qualitative coding, it’s vital to separate literal responses (“I prefer more fruity flavors”) from interpretive sentiments (“It tastes like a tropical vacation in a can, but it’s too sweet”). This helps ensure you capture direct references (like brand names) alongside deeper, more emotional undercurrents of feedback. For example, if Nike tested a new sports drink line targeting “Fans of Gatorade, BodyArmor, and Powerade,” open-ends on “favorite sports drink traits” might literally mention “freshness” 47% of the time, while interpretive sub-themes such as “reminds me of fun training days” appear 21% of the time. By splitting these two types of statements, your coding structure gains both concreteness and deeper insight, setting the stage for a thorough analysis.

3. Test on a Pilot Group First 🧪

Rather than diving head-first into coding all open-ends from thousands of respondents, pilot your approach with a smaller sample. For instance, if Nestlé ran a short open-end question on “What’s your ideal coffee experience?” among just 50 coffee enthusiasts, you might find that 60% referred to “bold flavor,” while 40% specifically mentioned “low sugar content,” giving an initial direction on potential codes. This pilot run would highlight potential pitfalls (like repeated mention of “cheap pricing” in 15% of answers) and help refine the codebook for the larger study.

4. Embrace Thematic Bins for Coding 📂

Start with broad buckets—like taste, brand association, or packaging—before zooming in on more granular codes. This helps you keep track of major categories in open-ends, such as “memorable marketing campaigns” or “bad aftertaste.” If Starbucks ran a study, they might create top-level bins like “Flavor Innovations,” “Brand Design,” and “Competitive Mentions,” then break them down further (e.g., “tropical,” “spicy,” “eco-friendly packaging”). The result: a structured system that grows in detail without turning into a labyrinth of unmanageable codes.
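A two-level codebook also makes reporting easier, because granular codes can be rolled up into their parent bins. In this minimal Python sketch, the bin names echo the Starbucks example, while "logo" and "names a competitor" are hypothetical child codes added for illustration. Each response counts once per bin, even if it carries several codes from that bin.

```python
from collections import Counter
from typing import Dict, List, Set

# Hypothetical two-level codebook: top-level bin -> granular codes
BINS: Dict[str, List[str]] = {
    "Flavor Innovations": ["tropical", "spicy"],
    "Brand Design": ["eco-friendly packaging", "logo"],
    "Competitive Mentions": ["names a competitor"],
}
CODE_TO_BIN = {code: bin_name for bin_name, codes in BINS.items() for code in codes}


def responses_per_bin(coded: List[Set[str]]) -> Counter:
    """Count responses touching each bin; a response counts once per bin.

    An unknown code raises KeyError on purpose, so typos surface during coding.
    """
    return Counter(
        bin_name
        for codes in coded
        for bin_name in {CODE_TO_BIN[code] for code in codes}
    )


coded = [{"tropical", "spicy"}, {"logo"}, {"tropical", "eco-friendly packaging"}, set()]
print(responses_per_bin(coded))
```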

5. Consider Cross-Category Overlaps 🔎

In the real world, an open-end response often spans multiple themes. “I love the new lime flavor, but the branding is way too flashy” touches on both taste and design. A single mention of “too sweet” can slide into health concerns and negative brand perception if respondents elaborate about sugar intake. If Pepsi found that 30% of open-ends discussing “too sweet” also tied it to health anxieties, it would enrich their insights and guide more precise product or messaging tweaks.
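Statements like "30% of 'too sweet' mentions also tie it to health" are conditional shares over multi-label codes. A minimal Python sketch with made-up coded responses shows both that calculation and a simple pairwise co-occurrence count:

```python
from collections import Counter
from itertools import combinations
from typing import List, Set, Tuple


def conditional_share(coded: List[Set[str]], given: str, also: str) -> float:
    """Among responses coded `given`, the share also coded `also` (0.0 if none have `given`)."""
    with_given = [codes for codes in coded if given in codes]
    if not with_given:
        return 0.0
    return sum(also in codes for codes in with_given) / len(with_given)


def co_occurrence(coded: List[Set[str]]) -> Counter:
    """How often each pair of codes appears in the same response."""
    pairs: Counter = Counter()
    for codes in coded:
        for pair in combinations(sorted(codes), 2):
            pairs[pair] += 1
    return pairs


coded = [
    {"too sweet", "health concern"},
    {"too sweet"},
    {"too sweet", "health concern", "flashy branding"},
    {"lime flavor", "flashy branding"},
]
print(f"{conditional_share(coded, 'too sweet', 'health concern'):.0%} of 'too sweet' responses mention health")
print(co_occurrence(coded).most_common(3))
```

Sorting each response's codes before pairing ensures ("health concern", "too sweet") and ("too sweet", "health concern") are counted as the same pair.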

Here's what an overlap of codes might look like:

6. Use a Hybrid Approach of Manual & Automated Tools 🤖

While AI-driven text analysis can spot recurring words like “refreshing” or “fake sugar” in a split second, human coders bring the nuance needed to interpret sarcasm, humor, or cultural references. Combining technology with a human touch ensures both efficiency and accuracy. If Coca-Cola leveraged an automated text analytics tool to scan 1,000 open-ends about a new “Fiery Citrus” flavor concept, it might reveal 38% describe it as “intriguingly spicy.” However, manual coding could further reveal that 12% use language implying confusion with hot sauce—something an algorithm alone might not pick up.

7. Rely on Keyword Frequency but Don’t Stop There 🔢

Seeing that “mango” (or “citrus”) gets mentioned 45% of the time is helpful, but it doesn’t tell you if people actually liked it. Qualitative coding means probing deeper: is the context positive, negative, or neutral? For instance, 20% of those flavor references could be complaints about it tasting too artificial. If Target introduced a “Spicy Mango” flavor concept and found “mango” repeated often, they’d still need to decode whether it’s a beloved highlight or a disliked gimmick. Balancing frequency data with context-based codes prevents misreading simple word counts as unequivocal endorsement.

8. Explore Sentiment Beyond Positive/Negative 🤷

Everyone likes to boil down sentiment to a neat “people loved it” vs. “they hated it.” Real-world feedback is rarely that tidy, often falling into categories like “cautious optimism,” “mild disappointment,” or “confusion.” If Monster Energy tested an open-ended question—“What emotions come to mind when you see our upcoming Spicy Lime can design?”—they might find 47% responding with “intrigue” or “curiosity,” while 15% say they feel “overwhelmed” by the bright color palette. Accounting for these nuanced sentiments provides a more layered perspective on how people actually feel.

For instance, prior to the 2024 election, I ran a survey on Trump vs. Kamala, primarily trying to isolate the key swing issues that either party could have leveraged to sway the vote in their particular direction.

I also had an open-end question asking "why are you voting for the candidate you're voting for?" Of course, these open-ends are of greater value when first segmented into the verified behavioral subgroups (Registered Democrats, Republicans & Independents) that I could easily target within the Pollfish platform. All this to say, if you looked at these with a simple positive/negative lens, you'd miss out on all of the critical insights hiding in the nuanced language of the respondents.

As an interesting real-world example - "two evils" came up a ton in my survey, indicating a fair amount of dislike for both parties' candidates, but the need to support the one they figured was less bad for the country.

By the way, you can access this survey's results here.

9. Incorporate Emotional Lexicons 🧠

Emotional lexicons, which map keywords to specific feelings like excitement, nostalgia, or annoyance, can give your codes more texture. For instance, “craving that tang” might imply positive anticipation, whereas “reminds me of cough syrup” is a negative association cloaked in nostalgia or medical undertones. If Red Bull discovered that 27% of respondents mention it “smells like childhood candy,” that’s a distinct emotional anchor labeled as “sweet nostalgia.” Doing so helps quantify intangible reactions and articulate them more convincingly to stakeholders.
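A minimal Python sketch of the idea: a small, hypothetical phrase-to-emotion lexicon tags each response, and the tags are turned into shares you can put in front of stakeholders. Real projects often start from a published emotion lexicon and adapt it to category-specific language; the phrases and labels here are illustrative only.

```python
from typing import Dict, List

# Hypothetical phrase-level lexicon (keys must be lowercase)
EMOTION_LEXICON: Dict[str, str] = {
    "craving": "anticipation",
    "can't wait": "anticipation",
    "childhood candy": "sweet nostalgia",
    "cough syrup": "medicinal aversion",
    "too sweet": "annoyance",
}


def tag_emotions(response: str) -> List[str]:
    """Sorted list of emotions whose trigger phrases appear in the response."""
    text = response.lower()
    return sorted({emotion for phrase, emotion in EMOTION_LEXICON.items() if phrase in text})


def emotion_shares(responses: List[str]) -> Dict[str, float]:
    """Share of responses tagged with each emotion in the lexicon."""
    tagged = [set(tag_emotions(r)) for r in responses]
    emotions = sorted(set(EMOTION_LEXICON.values()))
    return {e: sum(e in t for t in tagged) / len(responses) for e in emotions}


print(tag_emotions("Smells like childhood candy, I'm craving that tang"))
print(emotion_shares(["childhood candy vibes", "cough syrup aftertaste", "meh", "craving it already"]))
```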

10. Use Iterative Refinement of Codes 🎯

Nobody gets their codebook perfect on the first try—especially when participants mention off-the-wall responses like “it tastes like a flaming rubber duck.” Start with best-guess categories, then refine them as new themes emerge during analysis. If Kellogg’s discovered a recurring mention of “mid-day pick-me-up” (19% of open-ends) that didn’t fit neatly into existing bins, they might add a new code under “functional benefits.” An iterative approach ensures your coding framework evolves alongside real human language and creativity.

11. Build Intercoder Reliability 🏆

Having more than one person code the same open-ended responses can feel like watching two chefs argue over salt, but it’s crucial for consistency. You want to ensure that what one coder labels “fruity fragrance” isn’t translated by another coder as “flowery aroma.” If General Mills had multiple coders working on the same dataset, they’d likely measure intercoder agreement—often with something like Cohen’s kappa—to confirm that their categories and definitions are robust and replicable. The better your reliability, the more confidently you can communicate findings to the higher-ups (who usually just want the bullet points anyway).
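Cohen's kappa corrects raw percent agreement for the agreement two coders would reach by chance. Here is a minimal Python sketch, assuming each coder assigns exactly one code per response (multi-label coding usually needs a per-code kappa or a different statistic). scikit-learn's `cohen_kappa_score` computes the same statistic if you'd rather not roll your own.

```python
from collections import Counter
from typing import List


def cohens_kappa(coder_a: List[str], coder_b: List[str]) -> float:
    """Cohen's kappa for two coders who each assigned one code per response."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("need two equal-length, non-empty lists of codes")
    n = len(coder_a)
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    # Agreement expected by chance, from each coder's own code frequencies
    expected = sum(freq_a[c] * freq_b[c] for c in set(freq_a) | set(freq_b)) / (n * n)
    if expected == 1:
        return float("nan")  # both coders used one identical code throughout; kappa is undefined
    return (observed - expected) / (1 - expected)


coder_1 = ["fruity", "fruity", "flowery", "fruity", "flowery", "fruity"]
coder_2 = ["fruity", "flowery", "flowery", "fruity", "flowery", "fruity"]
print(f"kappa = {cohens_kappa(coder_1, coder_2):.2f}")
```

The two coders agree on 5 of 6 responses (83%), but because half of that agreement would be expected by chance, kappa is about 0.67, a more honest picture of how consistent the codebook really is.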

12. Visualize Trends for Stakeholders 📊

A giant spreadsheet of coded data can be about as exciting as reading the phone book, so consider turning your codes into graphs or infographics. If Kraft Heinz noticed that 44% of respondents bemoan a “lack of carbonation,” they could show it in a pie chart or bar chart for immediate clarity. The same goes for trending new flavors: if 35% highlight “tangerine” as the next big thing, that’s prime real estate on a simple visualization. Visual cues not only streamline strategic decision-making but also help internal teams and external clients see patterns at a glance.

13. Weave in Real-Life Context 🌐

A response like “I’d totally buy this if I saw it while gaming” reveals lifestyle or situational context worth coding. If Danone realized that 23% of their yogurt consumers are primarily snacking “during gaming sessions,” they’d uncover an untapped promotional angle. Layering context codes—like “gaming environment,” “social gatherings,” or “on-the-go”—helps paint a complete picture of when, where, and why consumers engage with a product. It’s the difference between marketing a “great-tasting snack” and a “fuel for your epic gaming marathon.”

14. Tracking Changes Over Time 🕰️

Qualitative coding isn’t a one-and-done scenario if you plan to iterate your product or brand. If Hershey ran monthly open-ends to see how a new chocolate bar formula is received, they might find that in the first month, 40% of comments focus on “lack of creaminess,” but by the third month—after tweaking the recipe—only 8% mention it. Monitoring how themes rise or fall month-over-month can identify if changes are resonating or if a brand-new wave of complaints is brewing.
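Tracking a theme over time boils down to computing its share within each period. A minimal Python sketch with hypothetical month labels and codes follows; ISO-style "YYYY-MM" labels are assumed so that sorting them also sorts chronologically.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple


def theme_share_by_period(coded_rows: List[Tuple[str, Set[str]]], theme: str) -> Dict[str, float]:
    """Percent of each period's responses that were coded with `theme`."""
    totals: Dict[str, int] = defaultdict(int)
    hits: Dict[str, int] = defaultdict(int)
    for period, codes in coded_rows:
        totals[period] += 1
        hits[period] += theme in codes  # True counts as 1
    return {p: 100 * hits[p] / totals[p] for p in sorted(totals)}


rows = [
    ("2024-01", {"lack of creaminess"}),
    ("2024-01", {"price"}),
    ("2024-03", {"price"}),
    ("2024-03", set()),
]
print(theme_share_by_period(rows, "lack of creaminess"))
```

Keep each period's sample size next to the percentage: a drop from 40% to 8% is far more convincing on 1,000 responses a month than on 50.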

15. Merge Qualitative with Quantitative Indications ⚖️

Survey data often mixes open-ends with closed questions like rating scales or MaxDiff. For instance, if Unilever introduced a new “Tropical Chill” flavor and 65% rate it a 4 or 5 on a 5-point scale, while 35% rate it 3 or below, overlaying that with open-end codes clarifies why certain people adore it (“tastes exotic,” 22%) and why others are less keen (“too reminiscent of chili sauce,” 13%). This synergy between coded text and numeric metrics gives stakeholders a fuller, more actionable story.
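Overlaying codes on ratings is a simple cross-tab. This minimal Python sketch splits hypothetical respondents into 4-5 raters and 3-or-below raters, then reports how often each open-end code appears in each group. The rows and the "refreshing" code are made up for illustration.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Set, Tuple


def codes_by_rating_group(rows: List[Tuple[int, Set[str]]]) -> Dict[str, Dict[str, float]]:
    """Percent of each rating group (4-5 vs. 1-3) whose open-end received each code."""
    groups: Dict[str, List[Set[str]]] = defaultdict(list)
    for rating, codes in rows:
        groups["4-5" if rating >= 4 else "1-3"].append(codes)
    result = {}
    for group, code_sets in groups.items():
        counts = Counter(c for codes in code_sets for c in codes)
        result[group] = {c: 100 * k / len(code_sets) for c, k in counts.items()}
    return result


rows = [
    (5, {"tastes exotic"}),
    (4, {"tastes exotic", "refreshing"}),
    (4, set()),
    (2, {"too reminiscent of chili sauce"}),
    (3, set()),
]
print(codes_by_rating_group(rows))
```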

16. Turn Insights into a Next-Level Storytelling Device 📜

Once you’ve coded all the open-ends, tie it back to real business actions and strategy. If Mars were to code thousands of responses, they might show how a good chunk (38%) of people prefer “lighter carbonation,” leading to potential R&D changes, or how 26% ask for a “healthier energy blend,” guiding a marketing pivot. As Troy Harrington of Pollfish puts it, “Qualitative coding unlocks the ‘why’ behind the ‘what,’ equipping brands with narrative depth that resonates.” Include additional capabilities, like video highlight reels, to let stakeholders experience actual respondent quotes or a montage of consumer emotions—nothing sells a story like seeing it firsthand.

17. Know When to Stop Coding—Don’t Overthink It 🔒

Sometimes, enough is enough. If Dunkin' is analyzing "morning routine habits," and themes stabilize at "coffee," "breakfast," and "time-saving," stop there. Coding forever doesn’t lead to better insights.
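One common way to make "when to stop" less subjective (an assumed heuristic, not a rule from this article) is a saturation check: code responses in batches and stop once a set number of consecutive batches produce no new codes. A minimal Python sketch with hypothetical batches:

```python
from typing import List, Optional, Set


def batches_until_saturation(batches: List[List[Set[str]]], patience: int = 2) -> Optional[int]:
    """Return how many batches were coded before `patience` batches in a row added no new codes.

    Returns None if saturation was never reached.
    """
    seen: Set[str] = set()
    quiet = 0
    for i, batch in enumerate(batches, start=1):
        new_codes = set().union(*batch) - seen
        seen |= new_codes
        quiet = 0 if new_codes else quiet + 1
        if quiet >= patience:
            return i
    return None


batches = [
    [{"coffee"}, {"breakfast"}],
    [{"time-saving"}, {"coffee"}],
    [{"coffee", "breakfast"}],
    [{"time-saving"}],
    [{"coffee"}],
]
print(batches_until_saturation(batches))
```

The `patience` value is a judgment call: a higher value guards against stopping just before a rare theme shows up, at the cost of more coding time.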

Qualitative coding is both an art and a science, offering unparalleled depth to market research when approached with care. If you don't anticipate tracking drivers or running surveys with samples large enough to justify a dedicated qualitative coding effort, then Pollfish is perfect for you. For busy professionals who have the resources, leveraging the full coding services that Prodege offers can make the journey from raw data to actionable insight less daunting and more empowering.

Top 25 Competitor Market Research Strategies

Top 25 Competitor Market Research Strategies 🕵️‍♂️

Everyone thinks they've got the best product...

Truth is, we all have competitors selling similar offerings, and you constantly see value propositions like "highest-quality!" or "truly the best service," but these aren't really differentiating, right? In fact, there's an argument to be made that most brands aren't differentiating with their messaging at all; they're commoditizing.

To TRULY stand out, you'll want to deeply know your competitors by doing serious competitive market research.

This can be done most effectively by targeting your competitors' users in a Pollfish survey. In addition to that, here are 25 ways to study your competition.

1. Decode Your Competitor’s Target Audience 🔍

Let's say you're launching a beach-style clothing brand, and you want to understand your competitors' customers. You can create a survey with a multi-select question toward the front and screen for those who have recently worn Hurley, Volcom, Quiksilver, etc. After that, ask whatever you want to know about them to better serve that audience!

Or, let's say you're Netflix, and you want to understand perceptions of your brand vs. your competitors'. You could have a question like this:

Funny enough, you can actually skip these questions in Pollfish by just selecting these streaming behaviors of users directly from the list of 7,000 validated brand interactions we track with our consumer panel.

2. Analyze Their Messaging 🌌

It's ALL about the UVP. Competitors’ brand messaging reveals their unique value propositions (UVPs). Take a cue from the beverage giant Monster Energy.

If Monster targets audiences interested in “competitive eSports gamers,” their slogan, social ads, and promotions likely emphasize high-energy performance. By comparing survey responses, like “Which energy drink do you trust for gaming?”—with 55% choosing Monster and 20% choosing Red Bull—you can refine your messaging to tap into this segment.

3. Competitor Content Audit 🕁

Content is a big channel for many brands, which means you can see what they're doing to attract new business and do it even better! Conduct a detailed audit of your competitors’ blogs, videos, and social posts.

Suppose you’re in SaaS, competing with an HR tech giant like BambooHR. Break their content into themes, e.g., “employee wellness” vs. “hiring best practices.” A survey asking HR professionals, “Which content helps you the most in your role?” reveals that 60% prefer BambooHR’s wellness focus. Use that intel to create better, more tailored content.

4. Study Ad Placements 🗽

This is tricky to do in "the wild" of the internet, because targeting is so complex and based on all sorts of algorithms. So the best way to do this is... with a survey! Advertising reveals a lot about your competitors’ strategies. For example, the fitness equipment industry leader Peloton might target audiences like “home gym enthusiasts” or “first-time exercisers.” Your survey could ask respondents, “Where have you seen Peloton ads?”—42% may mention YouTube. Now, you know where to direct your ad spend to compete.

5. Track Pricing Models 💸

Don't know how to price? Nothing beats a Van Westendorp survey for that.

But if you're not ready for that, let's consider an example. If your competitor is the well-known e-commerce tool Shopify (below), and this is their pricing, your prospects will already tend to bucket price points relative to it, and you'll need to differentiate your offering to justify any deviation from that expected pricing.

Let's consider another example: imagine competing with a subscription box service like Stitch Fix in the retail space. Use conjoint analysis to test options like “Would you prefer a $20 styling fee with free returns or a $40 flat fee with discounts?” Analyzing responses might reveal that 65% prefer the competitor's model, a clue for your next move.

6. Deconstruct Product Features 🎡

Yes, yes, sell the benefits, not the features. But differentiated benefits actually stem from unique features. Survey your audience to determine what features resonate most with competitor offerings. For instance, if a competitor offers “customizable dashboards” for their project management software, ask users to rate its importance on a scale of 1-5. Results such as 70% choosing 5 (very important) show where you may need to prioritize development.

I like to use matrix questions to rank multiple features at once on a common scale. Here's what a matrix question looks like on the Pollfish platform's results page.

This example asked which aspect of AI would be most useful in supporting office workers' job duties. An AI vendor could use this to decide where to focus its features: text, audio, images, voice, etc. In this case, text ranked very strongly, with 113/400 choosing it as the #1 option. That's useful data!

On the flip side, robotics ranked lowest at 159/400 as #5 (which makes sense because these are office workers).
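If you export the raw rankings, the summaries quoted above (how many respondents put an option at #1, how many put it last) are easy to reproduce, along with an average rank. A minimal Python sketch, assuming each respondent's answer is a list ordered from #1 to last; the option names echo the example, but the three rankings are made up.

```python
from collections import Counter, defaultdict
from typing import Dict, List


def summarize_rankings(rankings: List[List[str]]) -> Dict[str, Dict[str, float]]:
    """Per option: count of #1 rankings, count of last-place rankings, and average rank."""
    first = Counter(r[0] for r in rankings if r)
    last = Counter(r[-1] for r in rankings if r)
    positions: Dict[str, List[int]] = defaultdict(list)
    for ranking in rankings:
        for pos, option in enumerate(ranking, start=1):
            positions[option].append(pos)
    return {
        option: {
            "ranked_first": first[option],
            "ranked_last": last[option],
            "avg_rank": sum(p) / len(p),
        }
        for option, p in positions.items()
    }


rankings = [
    ["text", "images", "audio", "video", "robotics"],
    ["images", "text", "audio", "video", "robotics"],
    ["text", "audio", "images", "robotics", "video"],
]
for option, stats in summarize_rankings(rankings).items():
    print(option, stats)
```

Average rank complements the #1 counts: an option that is rarely first but consistently second can still be a strong candidate for development.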

See this full survey in Pollfish here!

7. Observe Industry Buzzwords 🧠

What's the "very demure" of your industry? Competitors often latch onto trendy industry language. For example, in the sustainable beauty industry, Lush might emphasize “cruelty-free” and “zero-waste.” Create a survey asking, “Which words influence your purchasing decision most?” If 40% choose “zero-waste,” it’s a sign to adapt your messaging.

8. Monitor Social Media Engagement 📲

Social isn't representative like a survey built on a quality Prodege audience, but it can still help you glean great insights. Dive into competitors’ social media interactions. Let’s say you’re tracking Dunkin’ in the quick-service restaurant industry. If their campaign targeting “coffee lovers” gets 10,000 shares and 80% positive sentiment, consider mimicking their style in your campaigns.

9. Evaluate Customer Service 🧳

Competitor customer service can be their Achilles’ heel. If Zappos excels with live chat—achieving 90% satisfaction rates—survey your respondents on preferred service channels. Insights like 50% preferring live chat can inform your strategy.

10. Examine Competitive Differentiators 🔄

Is your market commoditized? Probably. What sets your competitors apart aside from market share? Get that answer and you'll find the gaps you can fill.

A snack brand like KIND might win loyalty with “wholesome ingredients.” Ask audiences, “Which of the following makes you loyal to a snack brand?” If 65% cite ingredient transparency, your next campaign has a clear direction.

11. Conduct Benchmark Studies 🎮

Benchmarks are great, and Prodege has internal trackers across many industries to help you here. A benchmark study measures where your competitors stand on key metrics.

Survey an audience segment like “technology enthusiasts,” asking, “Which smart home brand do you associate with innovation?” Results such as 55% choosing Google Nest over Amazon Echo show where you’re lagging.

12. Dissect Brand Loyalty Programs 🏆

Competitors’ loyalty programs often hide insights. Starbucks’ Rewards program attracts a whopping 28 million members. Run a survey asking, “Which loyalty feature matters most?” If 45% choose free mini-samples of any coffee, integrate that into your next iteration.

13. Spot Seasonal Trends 🍁

Competitors often time their offerings around trends. For instance, bike sales peak just before summer. Survey your audience with questions like, “Which month are you most likely to buy a new bike?”— 70% may say June, informing your seasonal strategy.

14. Reverse Engineer Product Launches 🎨

Analyze how competitors test concepts and roll out new products. If Tesla promotes a new car via exclusive preorders, ask car buyers, “What excites you about product launches?” Responses like 55% choosing “exclusive access” help refine your rollout strategy.

15. Monitor Influencer Collaborations 📹

Competitors partnering with influencers can reveal targeting strategies. For example, Adidas might collaborate with niche “yoga influencers” to sell a new line.

Ask your audience, “Which influencers inspire your purchasing decisions?”—30% may mention specific yogis—a signal to emulate.

16. Investigate Product Reviews 🌍

Competitor reviews expose customer likes and dislikes. For instance, here's a review of one of our competitors (that happened to be written on our own site).

Reviews like this help us understand why, and even roughly how many, users may defect from certain competitors. In this case, our support team and easy pricing won this user over. You can find the same insights about your product in your market!

17. Track Market Share 🔄

In business, the winner often takes all. If you're not a share leader, or well-niched, you're fighting for market share crumbs. Understanding how the market is distributed shows how dominant each competitor is, and that informs your next steps.

If you're a ride-share service, for instance, ask, “Which ride-share app do you use most?” If Uber captures 70% of users, your market entry will require niche positioning.

18. Investigate Strategic Partnerships 💼

Competitor partnerships often align with growth objectives. If Airbnb partners with local tourism boards, survey travelers with, “Which platform improves your local experiences?” If 60% choose Airbnb, it’s time to evaluate similar collaborations.

19. Study Their Website UX 🌐

Competitors’ websites hold valuable UX insights. This is harder to target with a survey, but you can still find areas for improvement just by looking. Then, survey your own users with, “How would you rate your online shopping experience with X?” If only 10% call it “easy to navigate,” you've found where you might be falling short.

20. Evaluate Competitor Promotions 🎉

Promotions reveal how competitors drive demand. For example, Subway’s “$5 Footlong” campaign redefined value dining. However, they recently switched to a buy-two deal...

Either way, ask respondents, “What deal would be too good to refuse?”

21. Measure Brand Advocacy 🎗

Competitors’ advocacy levels can be measured via Net Promoter Score (NPS), which makes it a great way to benchmark how people think you compare to your competition.

Ask, “On a scale of 0 to 10, how likely are you to recommend X to a friend?” If their score dwarfs yours, it’s time for some serious brand building.
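Scoring NPS is simple arithmetic once you have the 0-10 answers: the percentage of promoters (9-10) minus the percentage of detractors (0-6), with passives (7-8) counted in the total but not subtracted. Here is a minimal sketch of that calculation. The `net_promoter_score` helper and both rating lists are hypothetical, made up purely for illustration.

```python
from collections.abc import Iterable


def net_promoter_score(ratings: Iterable[int]) -> float:
    """Compute NPS from 0-10 'How likely are you to recommend...' ratings.

    Promoters answer 9-10 and detractors 0-6; passives (7-8) count toward
    the total but are not subtracted. The result ranges from -100 to 100.
    """
    scores = list(ratings)
    if not scores:
        raise ValueError("need at least one rating")
    if any(not 0 <= s <= 10 for s in scores):
        raise ValueError("ratings must be on a 0-10 scale")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical ratings for two brands, for illustration only.
yours = [10, 9, 8, 7, 6, 3, 9, 10, 5, 8]
theirs = [10, 10, 9, 9, 8, 9, 7, 10, 6, 9]
print(net_promoter_score(yours), net_promoter_score(theirs))
```

With these made-up samples, your brand scores 10.0 and the competitor scores 60.0: exactly the kind of gap that calls for brand building.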

22. Learn From Product Failures ❌

There's nothing like a great product flop. Competitor flops are your treasure trove... but ONLY if you actually ask what happened! You've got to get the "why". Ask, “Why didn’t you buy X’s product?” If 80% say “the new colors are awful,” you know what to avoid.

23. Assess Sustainability Efforts 🌿

Sustainability matters more in some industries than others, but it is super important to some consumers. In industries like fashion, brands like Patagonia win by promoting sustainability. Ask audiences, “How much do eco-friendly practices influence your decision?” If 67% prioritize it, that should steer your corporate strategy.

24. Analyze Global vs. Local Strategies 🌍

People in Surf City, USA, and Taipei, Taiwan, won't always share the same consumer habits. Global competitors often tailor their strategies locally.

For instance, Coca-Cola’s regional flavors dominate. Survey consumers with, “Do you prefer global brands offering local options?” Insights guide your localization efforts.

25. Test Customer Acquisition Strategies 💳

Analyze how competitors draw in customers. For example, Netflix’s free trial strategy revolutionized streaming. Survey new users with, “What prompted you to try X?” If 60% cite “something they saw on Reddit,” adapt accordingly.

Closing Thoughts: Understanding your competitors isn’t just about knowing what they’re doing—it’s about knowing what you should be doing better.

22 Ways to Do Secondary Market Research

22 Ways to Do Secondary Market Research ✨

Secondary market research is perfect for exploring the lay of the land—like scoping out market trends or uncovering what’s already out there before drafting your own survey. Let’s dive into 22 expert tips that will have you mining data gems with the precision of a seasoned market researcher. Grab your caffeine—we’re skipping the fluff and going straight to the good stuff.

1. Understand the Data’s Origin Story 📺

Every data point has a backstory. Consider a company like Peet’s Coffee, analyzing the Beverages industry. Let’s say they’re targeting audiences who frequent Starbucks and Dunkin'. The goal is to uncover trends for launching a new seasonal blend. Secondary data from sources like Statista or Euromonitor might reveal that 43% of coffee drinkers prefer holiday-themed beverages. Always trace your data back to its source, checking for potential biases or outdated information.

2. Cross-Reference Multiple Sources ⚓

Never trust a single source. Imagine Under Armour entering the Apparel industry’s competitive research scene. They’re analyzing behavioral data on “outdoor running enthusiasts” to assess if launching high-performance trail shoes makes sense. If one report claims 55% of runners prefer trails, validate that number with at least two other datasets. Your secondary research should resemble a crime investigation—multiple witnesses for every claim.

3. Prioritize Recency Over Reputation ⌛

The data’s credibility matters, but so does its age. Take Spotify, looking into the Media industry while targeting “Gen Z audiophiles.” Their question type: “Which genre do you listen to most?” Response: 41% say lo-fi beats. Even reputable sources like Gartner need a recency check. Trends change faster than TikTok dances; stay current.

As a tangible example, here's a survey that Pollfish's Troy Harrington ran when Elon Musk publicly announced an early prototype of Tesla's Optimus robots. While the data says one thing as of the launch date in Q4 2024, public sentiment could be very different today. The survey is primary research for him; to you, as an onlooker, it becomes secondary market research.

By the way, you can click into that survey dashboard here!

4. Filter by Relevant Metrics ⚡

You wouldn’t use a fitness tracker to gauge stock market trends—so don’t pick irrelevant KPIs. A company like Monster Energy in the Drinks industry targeting Red Bull fans might ask: “What factors influence your energy drink purchases?” If 67% say “flavor variety,” that’s your golden metric. Match the metric to the market question to avoid spinning your wheels.

5. Contextualize Global Data Locally 🌎

Secondary data often comes from global sources, but markets behave differently on the ground. Say Tesla wants insights for their Automotive market expansion in India, targeting “environmentally conscious millennials.” Localized data might show that 38% cite “charging station availability” as a barrier to adoption. Tailor global insights for local relevance.

6. Benchmark Against Competitors’ Strategies ⚖

Secondary research is a fantastic spy tool. If Patagonia is studying the Retail industry’s eco-conscious shoppers, they might uncover that 74% prefer brands using recycled materials. This insight can inspire Patagonia’s next campaign. Competitive benchmarks aren’t just numbers—they’re blueprints.

7. Evaluate Data Accessibility and Limitations ❌

Not all data is created equal. A company like Snapchat, navigating the Tech industry, might access free reports showing that 54% of teens use the app daily. However, the freebie may lack nuanced demographic splits. Always weigh data availability against what you’re not seeing.

8. Dive into Niche Publications 🔬

Don’t overlook industry-specific goldmines. For example, Nike could find specialized insights in niche sports magazines about “female marathon runners” preferring cushioned shoes (71%). Niche data often provides the granular details big reports skim over.

9. Use Secondary Data to Predict Trends 🎯

Data from the past can be a crystal ball for the future. Suppose LEGO in the Toy industry is targeting “parents of STEM-loving kids.” Question type: “What skills do you want your child’s toys to enhance?” Insight: 52% say “problem-solving.” Extrapolating data like this can help LEGO create a robotics line that fits the trend.

10. Validate Vendor Claims with Independent Data ✉

Vendor pitches can be enticing, but always verify. If Coca-Cola in the Drinks industry considers a partnership, they might first check the vendor’s claims about “48% market penetration” against Nielsen reports. A little skepticism goes a long way.

11. Conduct Social Media Listening 🔊

Social chatter is secondary data in real-time. Imagine Chanel, studying the Fashion industry and targeting “fashion-forward Gen Z.” Analyzing Twitter trends might reveal that 29% rave about capsule wardrobes. Social listening tools like Brandwatch or Sprinklr can amplify insights.

12. Focus on Behavioral Over Intent Data 🏃

What people do trumps what they say. For instance, Apple in the Tech industry targeting “health-conscious professionals” might observe Fitbit usage trends showing that 68% of users track steps rather than workouts. Secondary behavioral data paints a clearer picture than survey intent.

13. Check for Red Flags in Data Quality ⚠

Secondary data often hides skeletons. Say Lululemon wants insights into the Fitness industry’s yoga enthusiasts. If a source claims 85% practice hot yoga but samples only 500 participants from Arizona, tread carefully. Assess sample size, geographic relevance, and methodology before trusting results.
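A quick margin-of-error calculation shows how much random sampling noise a reported percentage carries. Below is a minimal sketch, assuming a simple random sample and the normal approximation at roughly 95% confidence (z = 1.96). The `margin_of_error` helper is hypothetical, and the inputs reuse the illustrative hot-yoga figures above.

```python
import math


def margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Approximate margin of error for a proportion p from a simple random sample of size n.

    Uses the normal approximation; z=1.96 corresponds to roughly 95% confidence.
    """
    if not 0 <= p <= 1:
        raise ValueError("p must be a proportion between 0 and 1")
    if n <= 0:
        raise ValueError("n must be positive")
    return z * math.sqrt(p * (1 - p) / n)


# The illustrative hot-yoga claim above: 85% of 500 participants.
moe = margin_of_error(0.85, 500)
print(f"85% ± {moe * 100:.1f} points")
```

This prints "85% ± 3.1 points". A tight margin does not rescue a sample drawn only from Arizona, though. That error comes from who was asked, not how many, so geographic relevance and methodology still need their own checks.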

Always find the source. A good source should have a clear methodology, and if it's a full-service consumer panel, it should have awards and verifications, sort of like the ones we have below!

14. Map Insights to Buyer Personas 🔎

Secondary data must inform personas. Suppose Canon is diving into the Electronics industry targeting “pro-level photographers.” Their research might show 39% prefer mirrorless cameras. Matching these insights to persona goals helps refine marketing campaigns.

15. Analyze Competitive Ad Spending 💰

Where competitors spend reveals priorities. Consider Target, researching the Retail industry’s back-to-school shoppers. If Walmart’s ad spend spikes by 34% in July, it signals competition for that segment. Tools like Adbeat or SEMrush simplify ad spend analysis.

16. Assess Industry-Wide Challenges ⚖

Secondary research can highlight macro issues. For example, John Deere in the Agriculture sector might find that 51% of farmers cite “labor shortages” as their biggest hurdle. These insights provide opportunities for innovative product design.

17. Identify Gaps in Market Coverage 🌑

Sometimes, the absence of data is telling. If Samsung in the Tech industry finds no coverage on foldable phones for seniors, that’s a market to exploit. Look for under-researched areas and own them.

18. Validate Hypotheses with Hard Data 🔢

Secondary research is the litmus test for your hypotheses. Suppose Red Bull, in the Drinks industry, theorizes that Gen Z prefers smaller cans. Secondary sources showing 63% favor “portable beverage options” can confirm this.

19. Mine Government Databases 📚

Government reports are treasure troves of credible data. Imagine Ford researching Automotive safety regulations. The NHTSA might reveal that 44% of accidents involve distracted driving, shaping Ford’s tech integrations.

20. Factor in Economic Indicators 📊

Economic data influences industry trends. Suppose Airbnb in the Travel industry notices rising interest rates leading to 28% fewer bookings. These insights help anticipate shifts in customer behavior.

21. Leverage Academic Studies ⚙

Don’t ignore academia. For example, Adidas might use studies on “exercise psychology” to learn that 47% of gym-goers wear activity-specific shoes. Peer-reviewed research often has untapped gems.

22. Embrace Visual Data Analysis 🎨